Video creation used to require expensive equipment, a full production team, and weeks of editing. Not anymore.

AI video generators have changed everything. Now, anyone can create professional-quality videos in minutes — just from a text prompt, an image, or a script.

But here’s the problem: there are dozens of tools out there. Some are genuinely impressive. Others are overhyped and underdeliver.

So which ones actually work?

We tested over 20 AI video tools — generators, editors, and avatar creators — to find out. Whether you’re a marketer, content creator, filmmaker, or total beginner, this guide will help you find the right tool for your exact needs.

Here’s what we cover:

- The best AI video generators for text-to-video creation

- The best AI editors for polishing your footage

- The best avatar and template-based tools for business videos

How We Tested and Evaluated These Tools

Finding the best AI video generator isn’t as simple as picking the most popular one. We spent 200+ hours hands-on testing to give you real, unbiased results.

Here’s exactly how we did it.

Our Testing Process

We signed up for each tool individually. We tested free plans, paid tiers, and trial versions. Every tool was evaluated on the same set of tasks:

- Generating video from a text prompt

- Converting a script or blog post into video

- Editing and enhancing existing footage

- Creating AI avatars and voiceovers

This kept our comparisons fair and consistent across every platform.

What We Looked For

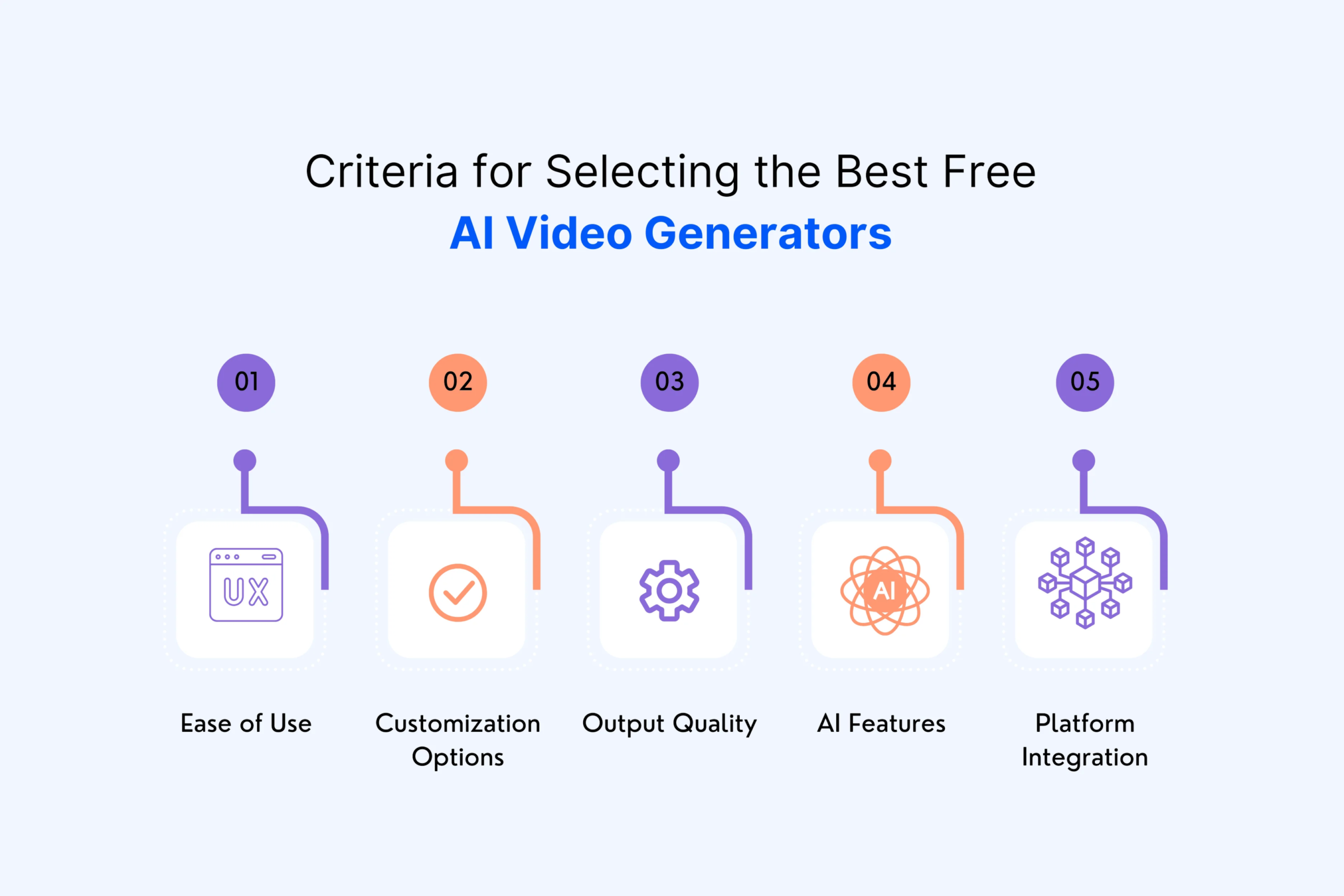

Not every tool is built for the same purpose. A filmmaker needs different features than a social media marketer. So we judged each tool against six core criteria:

1. Output Quality: Does the video actually look good? We checked resolution, motion smoothness, lighting, and how realistic the results appeared.

2. Prompt Accuracy: Does the tool deliver what you ask for? We tested simple prompts and complex, detailed ones to see how well each tool followed instructions.

3. Ease of Use: Can a beginner figure it out quickly? We timed how long it took to go from sign-up to first video output.

4. Speed: How long does generation actually take? Some tools kept us waiting minutes. Others delivered in seconds.

5. Pricing and Value: Is the free plan usable? Are paid tiers worth the cost? We broke down exactly what you get at each price point.

6. Consistency: Could the tool produce reliable results across multiple attempts? Or did quality vary wildly from one generation to the next?

Who Did the Testing?

Our team includes content creators, video editors, and marketers — people who use video tools professionally every day. We didn’t just run one test and move on. Each tool was tested multiple times across different use cases and prompt styles.

What We Did Not Consider

We ignored marketing claims entirely. A tool claiming to be “the world’s most advanced AI video platform” means nothing without proof. We judged purely on real-world performance.

We also excluded tools that were:

- Still in very early beta with unstable outputs

- Discontinued or no longer actively maintained

- Lacking clear pricing or accessibility for regular users

What Makes the Best AI Video Generator?

With so many tools available, it’s easy to get overwhelmed. Every platform claims to be the best. But what actually separates a great AI video generator from an average one?

Here are the factors that truly matter.

1. Output Quality That Holds Up

The most important thing is simple — does the video look good?

Great AI video generators produce output that is:

- Sharp and high resolution — at least 1080p, ideally 4K

- Smooth in motion — no jittery or unnatural movement

- Consistent in style — characters and scenes don’t randomly change mid-video

- Realistic in lighting and texture — details that make footage feel professional

Low-quality output is a dealbreaker, no matter how many features a tool offers.

2. Prompt Understanding and Accuracy

A good AI video generator should understand what you actually want. You shouldn’t need to write a perfectly engineered prompt just to get a decent result.

The best tools can:

- Follow both simple and complex instructions accurately

- Maintain context throughout longer video sequences

- Translate creative or abstract ideas into compelling visuals

If a tool consistently misses the mark on your prompts, it will slow down your workflow rather than speed it up.

3. Ease of Use

Not everyone using AI video tools is a professional filmmaker. The best platforms are accessible to beginners while still offering depth for advanced users.

Look for:

- A clean, intuitive interface

- Clear guidance on how to get started

- Fast time-to-first-video — ideally under five minutes

- Helpful templates or presets for common use cases

A powerful tool that takes weeks to learn defeats the purpose of using AI in the first place.

4. Generation Speed

Speed matters more than people realize. When you’re iterating on ideas or working to a deadline, waiting ten minutes per generation is frustrating and inefficient.

The best tools strike a balance between:

- Fast generation times without sacrificing quality

- Real-time or near-real-time previews where possible

- Efficient rendering even for longer video clips

5. Consistency Across Outputs

This is where many AI video tools still struggle. Generating one impressive video is easy. Generating ten consistently impressive videos is much harder.

Consistency means:

- Characters look the same from scene to scene

- Visual style doesn’t shift unexpectedly

- Results are predictable and repeatable across sessions

For marketers and businesses especially, inconsistency kills trust in a tool very quickly.

6. Customization and Creative Control

The best AI video generators don’t just do everything for you — they give you meaningful control over the output.

This includes:

- Adjusting camera angles, motion, and pacing

- Uploading reference images or style guides

- Fine-tuning voiceovers, captions, and music

- Choosing between different visual styles or aspect ratios

More control means more creative freedom — and better final results.

7. Pricing and Value for Money

A great tool at an unaffordable price isn’t practical for most users. We looked at each tool’s pricing structure carefully, asking:

- Is the free plan genuinely usable or just a teaser?

- Do paid plans offer fair value relative to output quality?

- Are there hidden limits on generations, exports, or resolution?

The best tools offer transparent, flexible pricing that suits different budgets and use cases.

8. Commercial Usage Rights

This one is often overlooked — but it’s critical for businesses and professional creators.

Before using any AI-generated video commercially, you need to know:

- Do you own the content you generate?

- Is the output cleared for commercial use?

- Are there watermarks on free or lower-tier plans?

Tools like Adobe Firefly are built specifically with commercially safe outputs in mind. Others have restrictions buried deep in their terms of service. Always check before publishing.

9. Platform Availability

Where can you actually use the tool? Some platforms are web-only. Others offer mobile apps or desktop software.

Consider your workflow:

- Do you need to edit on the go from a mobile device?

- Are you working in a team that needs collaboration features?

- Do you need integration with other tools like Premiere Pro or Google Drive?

The best tool is the one that fits seamlessly into how you already work.

Best AI Video Generators at a Glance (Comparison Table)

Before diving deep into individual tool reviews, here’s a quick overview of the tools that made our list. This comparison table gives you a snapshot of each platform’s strengths, pricing, and ideal use case, so you can quickly narrow down your options.

Quick Comparison Table

| Tool | Best For | Free Plan | Starting Price | Output Quality | Ease of Use |
| --- | --- | --- | --- | --- | --- |
| Google Veo | Reliable, consistent results | Yes (limited) | ~$20/month | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Runway | Film-making and creative control | Yes | $15/month | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Sora | Turning stories into video | No | $20/month | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Luma Dream Machine | Brainstorming and ideation | Yes | $29.99/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| LTX Studio | Extreme creative control | Yes (limited) | $19/month | ⭐⭐⭐⭐ | ⭐⭐⭐ |
| Adobe Firefly | Commercially safe outputs | Yes (limited) | $9.99/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| InVideo AI | Social media and marketing videos | Yes | $25/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Synthesia | AI avatars and corporate videos | No | $29/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| HeyGen | AI avatars and presentations | Yes (limited) | $29/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Descript | Editing video by editing script | Yes | $24/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Wondershare Filmora | Polishing video with AI tools | No | $19.99/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| VEED | Faster content production | Yes | $18/month | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| OpusClip | Extracting viral clips | Yes (limited) | $19/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Kling | Visual realism | Yes (limited) | $10/month | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Pika Labs | Character consistency | Yes | $8/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Hailuo MiniMax | Prompt adherence | Yes (limited) | $15/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Capsule | Branded video workflows | No | $35/month | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Pictory | Transforming content into video | Yes (limited) | $23/month | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |

How to Read This Table

Free Plan refers to whether the tool offers a genuinely usable free tier — not just a short trial. Tools marked “limited” offer free access but with significant restrictions on resolution, exports, or generation credits.

Starting Price reflects the lowest paid tier at the time of writing. Prices may vary based on region or promotional offers.

Output Quality and Ease of Use ratings are based on our hands-on testing across multiple use cases — not marketing claims.
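If you would rather filter the table than scan it by eye, here is a minimal sketch of one way to do it. The rows are copied from the comparison table (Google Veo's "~$20" is treated as 20.00); the `Tool` class, the `shortlist` function, and its thresholds are illustrative names of our own for this example, not part of any tool's API or of our scoring process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    free_plan: str   # "yes", "limited", or "no", as listed in the table
    price: float     # starting price in USD per month
    quality: int     # output-quality stars (1-5)
    ease: int        # ease-of-use stars (1-5)


# Rows transcribed from the comparison table.
TOOLS = [
    Tool("Google Veo", "limited", 20.00, 5, 4),  # table lists ~$20
    Tool("Runway", "yes", 15.00, 5, 4),
    Tool("Sora", "no", 20.00, 5, 4),
    Tool("Luma Dream Machine", "yes", 29.99, 4, 5),
    Tool("LTX Studio", "limited", 19.00, 4, 3),
    Tool("Adobe Firefly", "limited", 9.99, 4, 5),
    Tool("InVideo AI", "yes", 25.00, 4, 5),
    Tool("Synthesia", "no", 29.00, 4, 5),
    Tool("HeyGen", "limited", 29.00, 4, 5),
    Tool("Descript", "yes", 24.00, 4, 4),
    Tool("Wondershare Filmora", "no", 19.99, 4, 4),
    Tool("VEED", "yes", 18.00, 3, 5),
    Tool("OpusClip", "limited", 19.00, 4, 5),
    Tool("Kling", "limited", 10.00, 5, 4),
    Tool("Pika Labs", "yes", 8.00, 4, 4),
    Tool("Hailuo MiniMax", "limited", 15.00, 4, 4),
    Tool("Capsule", "no", 35.00, 4, 4),
    Tool("Pictory", "limited", 23.00, 3, 5),
]


def shortlist(tools, max_price, min_quality=4, need_free_plan=False):
    """Return tools within budget and quality, best quality first, then cheapest."""
    picks = [
        t for t in tools
        if t.price <= max_price
        and t.quality >= min_quality
        and (not need_free_plan or t.free_plan != "no")
    ]
    return sorted(picks, key=lambda t: (-t.quality, t.price))


# Example: five-star output quality for $15/month or less.
for tool in shortlist(TOOLS, max_price=15, min_quality=5):
    print(tool.name, tool.price)
```

With the table as it stands, that example prints Kling and Runway. Change the thresholds to match your own budget and priorities.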

Key Patterns We Noticed

Going through all these tools, a few clear patterns emerged.

Top-tier generators are pulling ahead fast. Google Veo, Runway, and Sora are in a league of their own when it comes to raw video quality. The gap between these and mid-tier tools is growing — not shrinking.

Avatar tools are becoming more accessible. Synthesia and HeyGen have both improved significantly. Creating a realistic AI presenter no longer requires an enterprise budget.

Free plans are getting stingier. As demand grows, many platforms are reducing what their free tiers offer. If you rely heavily on a free plan, expect limitations on resolution, watermarks, or monthly generation credits.

Ease of use and quality don’t always go together. The most powerful tools, such as LTX Studio and Runway, have steeper learning curves. The easiest tools, such as InVideo AI and Pictory, sacrifice some output quality for simplicity.

Which Category Do You Fall Into?

Not sure where to start? Here’s a quick guide:

You’re a content creator or social media manager → Start with InVideo AI or OpusClip. Fast, simple, and built for high-volume content production.

You’re a filmmaker or creative professional → Look at Runway, Google Veo, or Sora. These offer the highest output quality and the most creative control.

You’re a business or marketer → Synthesia, HeyGen, or Adobe Firefly are your best bets. Professional outputs, commercially safe content, and easy brand customization.

You’re a beginner on a tight budget → Try Luma Dream Machine or Pika Labs. Both offer solid free plans and genuinely beginner-friendly interfaces.

You work with long-form video content → OpusClip or Descript will save you the most time. Both are designed specifically for repurposing and editing existing footage efficiently.

Best for Reliable, Consistent Results — Google Veo

- Platform: Web

- Starting Price: ~$20/month

- Free Plan: Yes (limited via Google Labs)

- Best For: Creators and professionals who need high-quality, predictable video output every single time

What Is Google Veo?

Google Veo is Google’s flagship AI video generation model. It represents years of research and development from one of the most powerful AI teams in the world.

The current iteration — Veo 3 — is widely considered the most capable text-to-video model available in 2026. It doesn’t just generate visually impressive footage. It generates footage that is consistently impressive — across different prompts, styles, and complexity levels.

That consistency is what sets it apart.

First Impressions

Getting started with Veo is straightforward. Access is available through Google DeepMind’s VideoFX tool and increasingly through Google’s Gemini platform.

The interface is clean and minimal. There’s no overwhelming dashboard or complicated settings to navigate. You type your prompt, adjust a few basic parameters, and hit generate.

Within a minute or two — sometimes faster — your video is ready.

The first time we generated a video with Veo, the result was genuinely surprising. Not because it was perfect — but because it was so much closer to what we described than any other tool we tested.

What We Tested

We put Veo through a wide range of prompts across different styles and complexity levels:

- A cinematic drone shot over a mountain range at golden hour

- A photorealistic close-up of raindrops falling on a wet city street

- An animated character walking through a stylized fantasy forest

- A fast-paced product showcase video for a pair of running shoes

- A slow-motion ocean wave crashing against dark coastal rocks

Across every single test, Veo delivered output that was polished, detailed, and remarkably close to what we asked for.

Standout Features

Exceptional Prompt Understanding

Veo understands nuanced, detailed prompts better than almost any competitor. You can describe lighting conditions, camera movements, mood, and visual style — and Veo will actually attempt to replicate all of it. Most tools pick up on two or three elements of a complex prompt. Veo picks up on nearly all of them.

Cinematic Motion Quality

Motion in Veo-generated videos feels natural and intentional. Camera movements are smooth. Subject movement doesn’t stutter or glitch. This is one of the most common failure points in AI video generation, and Veo handled it better than any other tool we tested.

High Resolution Output

Veo generates video at up to 4K resolution, which is significantly higher than many competitors. For professional use cases — presentations, advertisements, broadcast content — this matters enormously.

Style Versatility

Whether you need photorealistic footage, animated content, or something with a specific cinematic aesthetic, Veo handles it all. It doesn’t excel at just one visual style. It excels across many — which is rare.

Strong Temporal Consistency

Characters and objects maintain their appearance throughout a video clip. This sounds basic — but many AI video tools still struggle with it. Veo keeps things coherent from the first frame to the last.

Where It Falls Short

No tool is perfect. Here’s where Veo still has room to improve.

Access is still limited. Veo’s most powerful features aren’t fully available to everyone yet. Availability through Google Labs and Gemini is expanding — but full public access remains somewhat restricted compared to competitors like Runway or Pika.

Pricing lacks transparency. Google’s pricing structure for Veo is less straightforward than competing platforms. Costs can vary depending on how you access the model and how many generations you run.

Limited editing tools. Veo is a generator — not an editor. Once your video is generated, you’ll need a separate tool to add captions, music, transitions, or other post-production elements.

Short clip length. Generated clips are currently limited in duration. For longer video projects, you’ll need to stitch multiple generations together — which adds time and effort to your workflow.
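Stitching short generations together does not need a full editor. One common option is FFmpeg's concat demuxer, and the sketch below shows the idea. FFmpeg is our assumption here (this guide does not prescribe a stitching tool), and the file names are hypothetical. The `-c copy` flag joins clips without re-encoding, which only works when every clip shares the same codec, resolution, and frame rate.

```python
def concat_list_text(clip_paths):
    """Build the text of an FFmpeg concat-demuxer list: one `file '...'` line per clip."""
    lines = []
    for path in clip_paths:
        # Inside single quotes, FFmpeg expects a literal quote written as '\''
        escaped = str(path).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    return "\n".join(lines) + "\n"


def concat_command(list_path, output_path):
    """Return the FFmpeg argument list that joins the listed clips by stream copy."""
    return [
        "ffmpeg",
        "-f", "concat",   # use the concat demuxer
        "-safe", "0",     # allow arbitrary file paths in the list
        "-i", str(list_path),
        "-c", "copy",     # no re-encode; clips must share codec settings
        str(output_path),
    ]


# Hypothetical clip names, in playback order.
clips = ["shot_01.mp4", "shot_02.mp4", "shot_03.mp4"]
print(concat_list_text(clips))
print(" ".join(concat_command("clips.txt", "final.mp4")))
```

Save the printed list as `clips.txt`, then run the printed command in the same folder. If the clips differ in resolution or frame rate, re-encode them to a common format first.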

Pricing Breakdown

| Plan | Price | What You Get |
| --- | --- | --- |
| Free (Google Labs) | $0 | Limited generations, watermarked output |
| Gemini Advanced | ~$20/month | Access to Veo via Gemini platform |
| API Access | Usage-based | Pay per second of generated video |

Pricing is expected to become more structured and accessible as Veo moves out of its limited release phase throughout 2026.

Who Is Google Veo Best For?

Veo is the right choice if:

- Output quality is your top priority and you won’t settle for anything less than the best

- You need consistent results across multiple generations for a professional project

- You’re creating cinematic or high-production content for advertising, film, or broadcast

- You work with detailed, complex prompts and need a tool that actually follows them

It’s probably not the right choice if:

- You need a free tool with generous limits for casual experimentation

- You want an all-in-one platform that includes editing, avatars, and templates

- You’re a complete beginner who needs heavy guidance and built-in tutorials

Best for Film-Making and Creative Control — Runway

- Platform: Web, iOS

- Starting Price: $15/month

- Free Plan: Yes (125 one-time credits)

- Best For: Filmmakers, creative directors, and video professionals who want maximum control over their AI-generated footage

What Is Runway?

Runway is one of the oldest and most respected names in AI video generation. While newer tools have grabbed headlines, Runway has quietly been building something more important — a complete creative ecosystem for professional video makers.

It’s not just a text-to-video generator. It’s a full suite of AI-powered filmmaking tools wrapped inside one platform. And in 2026, it remains the go-to choice for serious creative professionals.

The latest model — Gen-3 Alpha — represents a significant leap forward in cinematic quality, motion realism, and directorial control. If you’ve ever wanted to direct a scene the way a real filmmaker would — choosing shots, controlling camera movement, setting the mood — Runway is built exactly for that.

First Impressions

Runway’s interface feels different from most AI tools. It doesn’t feel like a consumer app. It feels like professional creative software — which is entirely intentional.

The dashboard gives you access to a wide range of tools immediately. Text-to-video, image-to-video, video-to-video, motion brush, camera controls — it’s all there from day one.

For a complete beginner, this can feel slightly overwhelming at first. But spend thirty minutes exploring the platform and the logic behind it becomes clear. Everything is designed around one goal — giving you directorial control over your AI-generated content.

What We Tested

We pushed Runway through a demanding set of creative scenarios:

- A noir-style detective scene with dramatic shadows and rain

- A sweeping aerial shot transitioning into a ground-level street scene

- A slow dolly shot moving through an abandoned library

- Converting a still photograph into a cinematic moving scene

- Applying a specific visual style to existing raw footage

Runway handled every single one of these with impressive results — particularly the camera movement tests, where it outperformed every other tool we tried.

Standout Features

Advanced Camera Controls

This is Runway’s single biggest differentiator. You can specify exact camera movements — dolly in, dolly out, pan left, tilt up, orbit, zoom — with a level of precision that no other AI video tool currently matches.

For filmmakers, this is transformative. You’re not just generating video. You’re directing it.

Motion Brush

The Motion Brush tool lets you paint motion onto specific parts of an image or video frame. Want the trees to sway while the character stays still? Want the water to ripple while the background remains static? Motion Brush makes it possible — and it works remarkably well.

Video-to-Video Transformation

Upload your own footage and Runway can apply an entirely new visual style to it. Turn raw smartphone footage into something that looks like a big-budget film. This feature alone makes Runway invaluable for indie filmmakers working with limited production budgets.

Act One — Character Performance Capture

One of Runway’s newer and most exciting features. Act One allows you to use a simple webcam recording to drive the facial expressions and movements of an AI-generated character. The result is surprisingly convincing character animation without any expensive motion capture equipment.

Multi-Scene Project Management

Unlike most AI video generators that produce isolated clips, Runway lets you build and manage multi-scene projects within the platform. This makes it far more practical for longer, more structured video productions.

Extensive Style Library

Runway offers a deep library of visual styles — from specific film aesthetics to animation styles to abstract looks. You can apply these to new generations or to existing footage, giving you enormous stylistic range without needing deep technical knowledge.

Where It Falls Short

Runway is genuinely impressive — but it has some real limitations worth knowing about.

The free plan is very restrictive. 125 one-time credits sounds reasonable until you realize a single generation can consume multiple credits. The free tier is really only enough to evaluate the tool — not to use it productively.

It has a learning curve. The depth of Runway’s feature set is both its greatest strength and its biggest challenge for new users. Beginners may feel lost initially and need time to get comfortable with the platform.

Generation speed can be slow. High-quality outputs — especially longer clips with complex camera movements — can take several minutes to render. When you’re iterating quickly on creative ideas, this adds up.

Clip length limitations. Even on paid plans, individual clip lengths are capped. Longer video productions require stitching multiple clips together, which demands additional effort in post-production.

Pricing gets expensive at scale. While the entry price is reasonable, heavy users who need large volumes of high-quality generations will find costs climbing quickly on higher-tier plans.

Pricing Breakdown

| Plan | Price | What You Get |
| --- | --- | --- |
| Free | $0 | 125 one-time credits, watermarked exports |
| Standard | $15/month | 625 credits/month, no watermark, 5-second clips |
| Pro | $35/month | 2,250 credits/month, longer clips, faster generation |
| Unlimited | $95/month | Unlimited standard generations, priority access |
| Enterprise | Custom | Custom credits, dedicated support, team features |
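Credit-based pricing is easier to compare once you turn it into a cost per credit and a rough clip count. The sketch below uses the prices and credit allowances from the table above. The `credits_per_clip` value is a made-up placeholder for illustration, because the real cost of a generation depends on the model and clip length you choose; check Runway's current rates before budgeting.

```python
# (monthly price in USD, monthly credits) taken from the pricing table above.
PLANS = {
    "Standard": (15.00, 625),
    "Pro": (35.00, 2250),
}
FREE_CREDITS = 125  # one-time credits on the free plan


def cost_per_credit(price, credits):
    """Dollars paid for each credit on a plan."""
    return price / credits


def clips_from_credits(credits, credits_per_clip):
    """Whole clips a credit allowance covers at a given per-clip cost."""
    return credits // credits_per_clip


for name, (price, credits) in PLANS.items():
    print(f"{name}: ${cost_per_credit(price, credits):.4f} per credit")

# Hypothetical rate: assume one clip costs 50 credits (not a published figure).
credits_per_clip = 50
print("Free plan clips:", clips_from_credits(FREE_CREDITS, credits_per_clip))
```

On these numbers, Standard works out to $0.0240 per credit and Pro to about $0.0156, so Pro credits cost roughly a third less. At the assumed 50 credits per clip, the free plan's 125 credits would cover only two clips, which is why it works for evaluation rather than production.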

Real-World Use Cases

Runway genuinely shines in specific professional scenarios:

Independent filmmakers use it to pre-visualize scenes before expensive live shoots — saving significant time and production budget.

Music video directors use the style transfer and motion tools to create visually distinctive content that would be impossible or prohibitively expensive to shoot practically.

Advertising agencies use Runway’s multi-scene project tools to rapidly prototype video concepts for client approval before committing to full production.

Content creators use the image-to-video and video transformation features to elevate the production quality of their existing footage without learning complex editing software.

Who Is Runway Best For?

Runway is the right choice if:

- You think like a filmmaker and want directorial control over camera, motion, and style

- You’re working on creative or artistic video projects where visual distinctiveness matters

- You need to transform existing footage into something with higher production value

- You’re building multi-scene video projects that require consistency across clips

- You want the most feature-rich AI video platform available today

It’s probably not the right choice if:

- You’re a complete beginner looking for the simplest possible tool

- You need high-volume, fast-turnaround video generation on a tight budget

- You want an all-in-one tool that includes avatars, templates, and social media formatting

Best for Turning Stories into Video — Sora

- Platform: Web, Android, iOS

- Starting Price: $20/month

- Free Plan: No

- Best For: Storytellers, writers, and creative professionals who want to transform narratives into visually compelling video content

What Is Sora?

Sora is OpenAI’s text-to-video model — and when it launched, it genuinely shocked the internet.

The demo videos were unlike anything anyone had seen from an AI system. Long, coherent, cinematic sequences with complex scene transitions, realistic physics, and stunning visual detail. For many people, it was the moment AI video generation stopped feeling like a novelty and started feeling like a genuine creative medium.

In 2026, Sora has matured significantly from those early demos. It’s now a fully accessible platform available to ChatGPT Plus and Pro subscribers — and it remains one of the most powerful tools available for anyone whose primary goal is translating a story, narrative, or script into compelling visual content.

First Impressions

Sora’s interface is clean, focused, and deliberately simple. There are no overwhelming dashboards or complicated toolsets to navigate.

You write your prompt. You set a few basic parameters — duration, aspect ratio, resolution. And you generate.

That simplicity is intentional. Sora is built around the idea that language is the primary creative input. The more vivid and detailed your written description, the better your output will be. It rewards good storytelling instincts more than technical knowledge.

For writers and narrative-driven creators, this feels immediately natural. For users who prefer visual, hands-on creative tools, it may feel slightly limiting at first.

What We Tested

We designed our Sora tests specifically around narrative and storytelling scenarios:

- A two-scene story of an astronaut discovering an abandoned space station

- A children’s fairy tale sequence following a fox through an enchanted forest

- A dramatic courtroom scene with multiple characters and emotional tension

- A historical recreation of a busy Victorian-era London street

- A brand story video following a product from factory floor to customer hands

Sora’s performance across these narrative-driven tests was consistently excellent. Scene coherence, character continuity, and emotional atmosphere were noticeably stronger than competing tools on story-based prompts.

Standout Features

Unmatched Scene Coherence

This is where Sora truly separates itself from the competition. Most AI video tools struggle to maintain visual consistency across a scene — characters change appearance, lighting shifts randomly, objects appear and disappear. Sora keeps everything coherent and consistent throughout a clip in a way that feels closer to real cinematography than AI generation.

Deep Narrative Understanding

Sora doesn’t just respond to keywords. It understands context, mood, and narrative arc. Describe a scene as tense and foreboding — and Sora will choose darker lighting, slower camera movement, and more deliberate pacing. This understanding of storytelling language is what makes it uniquely suited for narrative content.

Storyboard Mode

One of Sora’s most practical features for storytellers. Storyboard Mode lets you input multiple sequential prompts and generate a series of connected scenes that flow together logically. It’s the closest any AI video tool currently comes to producing a coherent multi-scene narrative automatically.

Realistic Physics and World Simulation

Sora was specifically trained to understand how the physical world works. Water moves realistically. Fabric responds to motion naturally. Objects interact with their environment in ways that feel grounded and believable. For storytelling content — where believability is everything — this matters enormously.

Style and Aesthetic Flexibility

From photorealistic live-action to stylized animation to vintage film aesthetics — Sora handles an impressive range of visual styles. Writers can match the visual tone of their content to the emotional register of their story without switching between multiple tools.

High Resolution and Long Duration

Sora supports video generation up to 1080p resolution and clips of up to 60 seconds in length — significantly longer than many competing tools. For narrative content, longer clip duration is a meaningful advantage.

Remix and Variation Tools

Not happy with your first generation? Sora’s Remix feature lets you take an existing generation and iterate on it — adjusting specific elements while keeping the overall scene intact. This iterative workflow is genuinely useful for refining narrative sequences without starting from scratch every time.

Where It Falls Short

Sora is impressive — but it has clear limitations that are worth understanding before committing.

No free plan. Unlike Runway or Luma Dream Machine, Sora requires a paid ChatGPT subscription to access. There’s no way to try it without spending money first — which is a real barrier for casual users or those on tight budgets.

Limited camera control. Compared to Runway’s sophisticated camera movement tools, Sora offers relatively limited directorial control over camera behavior. You can describe camera movements in your prompt — but you can’t control them with the same precision.

Generation speed varies. During peak usage times, Sora’s generation speed can slow down noticeably. For a tool used by millions of ChatGPT subscribers simultaneously, server load is a real and ongoing challenge.

No built-in editing suite. Like Google Veo, Sora is a generator rather than an editor. Generated clips need to be taken into a separate tool for post-production work — adding steps to your overall workflow.

Occasional physics errors. Despite its strong physics simulation, Sora still occasionally produces results where object behavior or human movement looks slightly unnatural. It’s less common than with competing tools — but it still happens.

Content restrictions. As an OpenAI product, Sora operates under strict content policies. Some creative prompts — particularly those involving violence, mature themes, or real public figures — will be declined. For filmmakers working with edgier narrative content, this can be a frustrating limitation.

Pricing Breakdown

| Plan | Price | What You Get |
| --- | --- | --- |
| ChatGPT Plus | $20/month | Access to Sora, limited generations per month, 480p resolution |
| ChatGPT Pro | $200/month | Priority access, higher resolution, significantly more generations |
| API Access | Usage-based | Pay per second of generated video, higher resolution options |

It’s worth noting that Sora access is bundled with ChatGPT subscriptions — meaning you’re also paying for access to GPT-4o and other OpenAI tools. If you’re already a ChatGPT Plus subscriber, Sora comes included at no extra cost.

Real-World Use Cases

Sora’s narrative strengths make it particularly valuable in specific creative contexts:

Screenwriters and filmmakers use Sora to rapidly visualize script scenes before committing to production. What used to require a storyboard artist and days of work can now be done in hours.

Children’s content creators use Sora to generate warm, coherent, character-consistent animation sequences for educational and entertainment content.

Brand storytellers use Sora to produce emotionally compelling brand narrative videos that feel more like short films than traditional advertising.

Game developers use Sora to generate cinematic cutscene concepts and world-building visual references during early development stages.

Educators and documentary makers use Sora’s historical recreation capabilities to bring written accounts and archival descriptions to life visually.

Sora vs. Runway — Which Should You Choose?

These are the two most capable AI video tools available in 2026 — but they serve different creative needs.

| | Sora | Runway |
| --- | --- | --- |
| Primary Strength | Narrative coherence and story-driven content | Cinematic camera control and filmmaker tools |
| Best Input | Detailed written prompts and scripts | Visual references and technical direction |
| Camera Control | Limited (prompt-based only) | Advanced (precise directorial control) |
| Free Plan | No | Yes (limited) |
| Ease of Use | Very easy | Moderate learning curve |
| Clip Length | Up to 60 seconds | Varies by plan |

Choose Sora if your primary creative input is language — if you think in stories, scripts, and narrative descriptions.

Choose Runway if your primary creative input is visual — if you think in shots, camera angles, and cinematic composition.

Who Is Sora Best For?

Sora is the right choice if:

- You’re a writer or storyteller who thinks primarily in narrative terms

- You want to visualize scripts or story concepts quickly and convincingly

- You need long, coherent video clips with strong scene consistency

- You’re already a ChatGPT subscriber and want to add powerful video generation to your workflow

- You’re creating emotional, narrative-driven content for brand storytelling, education, or entertainment

It’s probably not the right choice if:

- You need a free tool to experiment with before committing

- You want precise camera and motion control over your generated footage

- You need built-in editing, captioning, or post-production tools

- You’re working with content that may conflict with OpenAI’s content policies

Best for Social Media and Marketing Videos — InVideo AI

Platform: Web, iOS, Android

Starting Price: $25/month

Free Plan: Yes

Best For: Marketers, social media managers, and content creators who need to produce high volumes of polished video content quickly and consistently

What Is InVideo AI?

InVideo AI is not trying to compete with Runway or Sora on cinematic quality. It’s playing an entirely different game — and winning it convincingly.

Where tools like Sora focus on narrative depth and Runway focuses on filmmaking precision, InVideo AI focuses on one thing above everything else: speed of production at scale.

It is purpose-built for the realities of modern content marketing. You need videos — lots of them — for Instagram, YouTube, TikTok, LinkedIn, Facebook, and everywhere else your audience lives. You need them to look professional. You need them fast. And you need them without hiring a full video production team.

That is exactly what InVideo AI delivers.

In 2026, InVideo AI has evolved from a template-based video maker into a genuinely intelligent AI-powered content engine. You describe what you want in plain language — and the platform builds a complete, ready-to-publish video around your brief.

First Impressions

The moment you land on InVideo AI’s dashboard, the difference from tools like Runway or LTX Studio is immediately apparent.

Everything here is designed for speed and accessibility. The interface is bright, welcoming, and logically organized. There are no complex settings to configure before you can start creating. Templates, stock footage, voiceovers, captions — everything is integrated and ready to go from the moment you sign up.

We had our first complete video generated and ready to download within eight minutes of starting, sign-up included.

For busy marketers and content teams, that kind of speed matters enormously.

What We Tested

We designed our InVideo AI tests around real-world marketing and social media scenarios:

- A 60-second Instagram Reel promoting a fictional fitness app

- A YouTube explainer video breaking down a complex financial concept

- A TikTok-style product showcase for a skincare brand

- A LinkedIn thought leadership video based on a blog post

- A series of five themed social media videos with consistent branding across the set

- A news-style summary video generated from a text article

InVideo AI handled every one of these tasks quickly and competently. The branded series test stood out: the platform maintained visual consistency across all five videos without any manual adjustment.

Standout Features

AI Script and Prompt Generation

You don’t even need to write a full script. Describe your video in a sentence or two — “Create a 60-second promotional video for a sustainable coffee brand targeting millennials” — and InVideo AI writes the script, selects relevant stock footage, adds voiceover, and assembles the complete video automatically.

The AI understands marketing language, audience targeting, and content goals in a way that genuinely accelerates the briefing-to-video workflow.

Massive Stock Media Library

InVideo AI integrates with one of the largest stock footage libraries available — over 16 million video clips, images, and audio tracks. Finding relevant, high-quality visuals for almost any topic or niche is rarely a problem.

The AI automatically selects appropriate stock footage based on your script and prompt — saving significant time compared to manually searching and selecting clips yourself.

AI Voiceover with Natural Voice Options

InVideo AI includes built-in AI voiceover generation with a wide selection of voices across multiple languages and accents. Voice quality has improved dramatically in recent updates — most outputs now sound natural enough for professional use without any additional processing.

You can also upload your own voiceover recordings and sync them automatically to your video timeline.

Platform-Specific Formatting

This is one of InVideo AI’s most practically useful features. The platform automatically formats your video for the specific platform you’re targeting.

- Instagram Reels and TikTok → 9:16 vertical format

- YouTube → 16:9 landscape format

- LinkedIn → Square 1:1 format

- YouTube Shorts → 9:16 vertical format, optimized for short-form

One video brief can be automatically reformatted for every platform simultaneously — a huge time-saver for social media teams managing multiple channels.
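To make the reformatting concrete, here is a minimal Python sketch of how one brief could map to per-platform frame sizes. The ratios come from the list above. The 1080-pixel short edge, the platform keys, and the `frame_size` helper are our own illustrative assumptions, not InVideo AI's actual API or export settings.

```python
from fractions import Fraction

# Aspect ratios (width:height) taken from the list above.
# YouTube Shorts is assumed to use the standard 9:16 vertical format.
ASPECT_RATIOS = {
    "instagram_reels": Fraction(9, 16),
    "tiktok": Fraction(9, 16),
    "youtube": Fraction(16, 9),
    "linkedin": Fraction(1, 1),
    "youtube_shorts": Fraction(9, 16),
}


def frame_size(platform: str, short_edge: int = 1080) -> tuple[int, int]:
    """Return (width, height) in pixels with the shorter side set to short_edge."""
    ratio = ASPECT_RATIOS[platform]
    if ratio >= 1:
        # Landscape or square: height is the short edge.
        return int(short_edge * ratio), short_edge
    # Vertical: width is the short edge.
    return short_edge, int(short_edge / ratio)


for name in ASPECT_RATIOS:
    print(name, frame_size(name))
```

Under these assumptions, a single 1080-pixel master yields a 1920×1080 landscape cut, a 1080×1920 vertical cut, and a 1080×1080 square cut.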

Workflow Automation with Brand Kits

Set up your brand kit once — your logo, brand colors, fonts, and tone of voice — and InVideo AI applies it automatically to every video you create. Maintaining brand consistency across high volumes of content has never been easier or faster.

AI-Powered Script Editor

Not happy with the AI-generated script? The built-in script editor lets you modify text directly — and the video updates automatically to reflect your changes. Adding a new sentence adds new footage. Removing a paragraph trims the video accordingly. It’s an intuitive and genuinely time-saving workflow.

Multilingual Video Creation

InVideo AI supports video creation in over 50 languages — including automatic translation of scripts and voiceovers. For brands targeting global audiences or running multilingual campaigns, this is an enormously valuable capability that would otherwise require significant additional investment.

Collaboration Tools

Teams can collaborate directly within InVideo AI — leaving comments, sharing drafts, and managing approval workflows without needing to export files and share them externally. For marketing teams and agencies managing multiple clients, this keeps the production process organized and efficient.

Where It Falls Short

InVideo AI is excellent at what it does — but it has clear limitations that are important to understand.

Output quality has a ceiling. InVideo AI relies primarily on stock footage rather than generating original AI video footage. The results look professional and polished — but they don’t have the cinematic originality of tools like Runway or Sora. If visual uniqueness is a priority, InVideo AI may feel limiting.

Stock footage can feel generic. Even with millions of clips available, finding truly distinctive visuals for niche topics can still be challenging. Some categories, particularly B2B, technical, or highly specific industries, have few relevant stock options.

Limited creative control over visuals. Because the AI selects and assembles footage automatically, you have less granular control over specific visual choices than you would with a manual editing tool. Power users may find this frustrating.

Voiceover quality is inconsistent across languages. While English voiceover quality is strong, quality in less common languages can be noticeably more robotic and less natural. For global campaigns, this may require additional voiceover work in certain markets.

Free plan is significantly limited. The free tier allows video creation but applies a watermark to all exports and restricts access to premium stock footage and advanced features. It’s useful for evaluation — but not for professional output.

Not suitable for original cinematic content. If your goal is creating original, visually distinctive video content that doesn’t look like it came from a stock footage library — InVideo AI is not the right tool. It excels at professional polish, not creative originality.

Pricing Breakdown

| Plan | Price | What You Get |
| --- | --- | --- |
| Free | $0 | Watermarked exports, limited stock access, basic features |
| Business | $25/month | 50 AI generations/month, no watermark, full stock library access |
| Unlimited | $60/month | Unlimited generations, premium voices, team collaboration, priority support |
| Enterprise | Custom | Custom generations, dedicated account manager, API access, SSO |

Annual billing reduces these prices significantly — the Business plan drops to around $15/month on an annual subscription, making it one of the more affordable professional options in this category.
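A quick back-of-the-envelope calculation shows what those numbers mean in practice. The sketch below uses only the Business plan figures quoted above ($25/month, roughly $15/month on annual billing, 50 generations per month). The function names are ours, and actual prices may differ at checkout.

```python
def annual_savings(monthly_price: float, annual_plan_monthly_price: float) -> float:
    """Dollars saved over 12 months by choosing annual billing."""
    return (monthly_price - annual_plan_monthly_price) * 12


def cost_per_generation(monthly_price: float, generations_per_month: int) -> float:
    """Effective price of one AI generation if the full monthly quota is used."""
    return monthly_price / generations_per_month


# Business plan figures quoted in this review.
print(annual_savings(25, 15))        # 120
print(cost_per_generation(25, 50))   # 0.5
print(cost_per_generation(15, 50))   # 0.3
```

In other words, annual billing saves about $120 a year on the Business plan and brings the effective cost down from roughly 50 cents to roughly 30 cents per generation, provided you use the whole quota.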

Real-World Use Cases

InVideo AI’s strengths make it particularly valuable in specific professional contexts:

Social media managers use InVideo AI to maintain a consistent, high-volume publishing schedule across multiple platforms without burning out their team or blowing their production budget.

Digital marketing agencies use it to rapidly produce client video content at scale — particularly for campaigns requiring multiple platform-specific versions of the same core message.

E-commerce brands use InVideo AI’s product showcase templates and stock library to create professional product videos for advertising campaigns without investing in expensive video production shoots.

YouTubers and content creators use the explainer video and script-to-video features to maintain consistent upload schedules — particularly for educational or informational content channels.

Small business owners use InVideo AI as an affordable alternative to hiring a video production company — creating professional marketing videos independently without needing any technical video editing skills.

News and media publishers use the article-to-video feature to repurpose written content into video format — extending the reach and lifespan of existing editorial content.

InVideo AI vs. Pictory — Which Should You Choose?

Both InVideo AI and Pictory are designed for content repurposing and marketing video creation. Here’s how they compare:

| | InVideo AI | Pictory |
| --- | --- | --- |
| Primary Strength | AI-powered prompt-to-video creation | Transforming existing written content into video |
| Stock Library | 16 million+ clips | 3 million+ clips |
| AI Script Generation | Yes, from a simple prompt | Limited; works better with existing content |
| Platform Formatting | Automatic multi-platform formatting | Manual aspect ratio selection |
| Collaboration Tools | Yes, built-in team features | Limited |
| Starting Price | $25/month | $23/month |
| Best For | Creating new marketing videos from scratch | Repurposing blog posts and articles into video |

Choose InVideo AI if you’re primarily creating new video content from briefs, prompts, or scripts.

Choose Pictory if your primary use case is repurposing existing written content — blog posts, articles, reports — into video format.

Who Is InVideo AI Best For?

InVideo AI is the right choice if:

- You’re a social media manager or content creator who needs to produce high volumes of video content consistently

- You’re a marketer or agency managing multiple clients or campaigns simultaneously

- You need platform-specific formatting handled automatically without manual resizing and reformatting

- You want professional-looking results fast without investing time in learning complex video editing software

- You’re managing a brand and need consistent visual identity maintained across all video output

- You need multilingual video content for global audiences or international campaigns

It’s probably not the right choice if:

- You’re a filmmaker or creative professional prioritizing visual originality and cinematic quality

- You need to generate original AI footage rather than assembled stock footage

- You want granular creative control over every visual element of your video

- Your content requires highly specific or niche visuals that stock libraries don’t adequately cover

Best for AI Avatars and Corporate Videos — Synthesia & HeyGen

Platform: Web

Starting Price: Synthesia: $29/month | HeyGen: $29/month

Free Plan: Synthesia: No | HeyGen: Yes (limited)

Best For: Businesses, corporate teams, L&D professionals, and marketers who need professional presenter-led videos without cameras, studios, or actors

Why We’re Covering These Two Together

Synthesia and HeyGen are the two dominant forces in AI avatar video creation. They serve overlapping audiences, offer similar core features, and are constantly pushing each other to improve.

Rather than reviewing them separately — and making you read twice as much — we’re covering them side by side. By the end of this section, you’ll know exactly which one is right for your specific use case.

Both tools solve the same fundamental problem: creating professional, presenter-led video content without ever needing a camera, a studio, or a human on screen.

That’s a genuinely transformative capability for businesses. Training videos, product demos, corporate communications, onboarding content, sales enablement materials — all of it can now be produced in hours rather than weeks.

What Is Synthesia?

Synthesia is the pioneer of AI avatar video creation. Founded in 2017, it has spent years building the most polished and enterprise-ready AI avatar platform available.

The core premise is simple. You write a script. You choose an AI avatar — a photorealistic digital presenter. You select a voice and language. Synthesia generates a professional video of your chosen avatar delivering your script naturally and convincingly.

No cameras. No studios. No scheduling actors. No expensive production crews.

In 2026, Synthesia offers over 230 diverse AI avatars across different ages, ethnicities, and presentation styles. Voice quality is exceptional. Avatar lip-sync accuracy is the best in the industry. And the platform’s enterprise features — brand kits, team collaboration, SCORM export for LMS integration — make it the default choice for large organizations.

What Is HeyGen?

HeyGen launched later than Synthesia but has grown explosively — and for good reason. It took everything Synthesia established and pushed it further in several key areas, particularly around personalization, interactivity, and video translation.

HeyGen’s standout innovation is its Video Translation feature — the ability to take any existing video and automatically dub it into a different language while preserving the original speaker’s voice, lip movements, and emotional delivery. For global businesses, this is an extraordinary capability.

HeyGen also introduced Live Avatar — a real-time interactive avatar that can hold live conversations, respond to questions, and engage audiences dynamically. This moves AI avatars from passive video presenters into something genuinely interactive and conversational.

First Impressions

Synthesia feels immediately professional and enterprise-grade. The interface is clean, organized, and clearly designed for teams and organizations. Setting up your first video is straightforward — pick an avatar, paste your script, choose your voice, and generate. The whole process takes minutes.

HeyGen feels slightly more dynamic and consumer-friendly. The dashboard is energetic and feature-rich. There’s more to explore — but also more to learn. Getting your first standard avatar video created is equally quick. But exploring HeyGen’s deeper features — video translation, live avatars, custom avatar creation — takes more time to fully understand.

Both platforms are genuinely accessible. Neither requires any technical video production knowledge to use effectively.

What We Tested

We put both platforms through an identical set of tests to ensure a fair comparison:

- Creating a standard corporate training video with a scripted presenter

- Generating a product explainer video with branded visuals and avatar presenter

- Creating a personalized sales outreach video with dynamic variable insertion

- Testing video translation from English into five different languages

- Building a custom AI avatar from a video recording

- Testing the interactive live avatar in a simulated customer service scenario

- Generating content in multiple languages from a single script

Standout Features — Synthesia

Industry-Leading Avatar Quality

Synthesia’s avatars are the most photorealistic and naturally moving in the industry. Lip-sync accuracy is exceptional. Facial expressions are natural. Body language feels authentic. When you watch a Synthesia video, the avatar genuinely looks and feels like a real person presenting — not a digital puppet reciting text.

230+ Diverse Avatar Library

With over 230 avatars available — spanning different ages, ethnicities, genders, and presentation styles — finding an avatar that fits your brand and audience is straightforward. Synthesia consistently expands this library with new additions.

Custom Avatar Creation

Enterprise users can create a custom AI avatar from a short video recording of a real person. This means companies can build a digital version of their CEO, trainer, or brand spokesperson — delivering consistent, on-brand video content at scale without scheduling recurring video shoots.

SCORM Export for LMS Integration

This is a feature unique to Synthesia among AI avatar tools — and it’s enormously valuable for Learning and Development teams. SCORM export allows Synthesia-generated training videos to be uploaded directly into Learning Management Systems like Moodle, Cornerstone, and SAP SuccessFactors — complete with interactive quizzes and completion tracking.

Brand Kit and Template System

Synthesia’s brand kit lets organizations lock in their visual identity — colors, logos, fonts, intro and outro sequences — and apply it automatically across all video content. For large organizations producing high volumes of training and communications content, this consistency is invaluable.

140+ Language Support

Synthesia supports video generation in over 140 languages — with natural-sounding voice synthesis across all of them. For multinational organizations producing training or communications content for global teams, this breadth of language support is unmatched.

Collaboration and Approval Workflows

Synthesia includes robust team collaboration features — shared workspaces, commenting, approval workflows, and role-based access controls. These enterprise-grade workflow tools make Synthesia the natural choice for large teams managing high volumes of video content production.

Standout Features — HeyGen

Video Translation with Voice and Lip Preservation

This is HeyGen’s most impressive and distinctive feature. Upload any video, in any language, and HeyGen will automatically translate it into your target language while preserving the original speaker’s voice tone, emotional delivery, and lip synchronization.

The results are genuinely remarkable. A CEO delivering a company address in English can be automatically transformed into a natural-sounding Spanish, French, Mandarin, or Japanese version — without re-recording, without hiring translators, and without losing the authenticity of the original delivery.

For global businesses, this capability alone justifies the cost of HeyGen.

Live Avatar — Real-Time Interactive AI Presenter

Live Avatar is HeyGen’s most forward-looking feature. Rather than generating a pre-recorded video, Live Avatar creates a real-time interactive AI presenter that can hold live conversations, answer questions dynamically, and engage audiences in ways that traditional video content simply cannot.

Use cases include live customer service interactions, interactive product demonstrations, personalized sales calls at scale, and dynamic training sessions where participants can ask questions and receive immediate responses.

Personalized Video at Scale

HeyGen’s Personalized Video feature allows you to create video templates with dynamic variables — name, company, specific product details — that are automatically populated from a data source.

The result is hundreds or thousands of individually personalized videos — each one addressing a specific recipient by name and referencing their specific context — generated automatically from a single template. For sales teams running outreach campaigns, this is transformative.
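Conceptually, this works like a mail merge. The Python sketch below illustrates the idea with the standard library's `string.Template`. The field names, sample rows, and `render_scripts` helper are hypothetical, and the code does not call HeyGen's actual API.

```python
import csv
import io
from string import Template

# One script template with placeholders for the dynamic variables.
SCRIPT = Template(
    "Hi $name, I noticed $company is growing fast. "
    "Here is how $product could help your team."
)

# Stand-in for a CRM export or spreadsheet (hypothetical data).
CSV_DATA = """name,company,product
Ana,Northwind,our analytics suite
Ben,Contoso,our onboarding toolkit
"""


def render_scripts(template: Template, csv_text: str) -> list[str]:
    """Fill the template once per row of the data source."""
    rows = csv.DictReader(io.StringIO(csv_text))
    return [template.substitute(row) for row in rows]


for script in render_scripts(SCRIPT, CSV_DATA):
    print(script)
```

Each rendered script would then be handed to the avatar generator, so one template and one spreadsheet produce one video per recipient.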

Custom Avatar from Photo

Unlike Synthesia’s custom avatar process — which requires a video recording — HeyGen can generate a custom AI avatar from a single photograph. While video-based custom avatars are more realistic, the photo-based option offers a faster and more accessible entry point for smaller businesses or individual creators.

Instant Avatar

HeyGen’s Instant Avatar feature creates a basic custom avatar from a short two-minute webcam recording. No professional video shoot required. No lighting setup. Just record yourself briefly on your laptop camera and HeyGen generates a usable AI avatar within minutes.

Talking Photo

Upload any photograph of a person — real or illustrated — and HeyGen’s Talking Photo feature animates it to deliver your chosen script. The animated photo moves its mouth, blinks naturally, and delivers your voiceover convincingly. For social media content, personalized outreach, or creative projects, this is a fun and practical tool.

Where They Fall Short

Synthesia Limitations

No free plan. Synthesia requires a paid subscription from day one — there’s no way to try the platform without committing financially. For individuals or small businesses evaluating options, this is a real barrier.

Avatar expressions can feel stiff. Despite impressive lip-sync quality, Synthesia’s avatars occasionally feel slightly robotic in their overall body language and emotional expressiveness. They’re convincing — but not always fully natural.

Limited creative flexibility. Synthesia is built for professional, structured corporate video content. If you need creative, stylized, or visually distinctive video output, it feels constraining.

Pricing scales steeply for enterprises. While entry-level pricing is reasonable, enterprise-tier pricing — particularly for custom avatars and advanced collaboration features — can become significant for large organizations.

HeyGen Limitations

Free plan is very restrictive. HeyGen offers a free tier — but it’s extremely limited in terms of video length, resolution, and number of generations. It’s only useful for basic evaluation purposes.

Video translation accuracy varies. While the video translation feature is genuinely impressive, accuracy in less common language pairs can be inconsistent. Always review translated output carefully before publishing.

Live Avatar requires a strong internet connection. Real-time avatar interactions are demanding on bandwidth. Users with slower internet connections may experience latency or quality degradation during live sessions.

Custom avatar quality varies by input. The quality of HeyGen’s custom avatars is highly dependent on the quality of the input recording or photograph. Poor lighting or low-resolution source material produces noticeably lower-quality avatar output.

Pricing Breakdown

Synthesia Pricing

| Plan | Price | What You Get |
| --- | --- | --- |
| Starter | $29/month | 10 videos/month, 90+ avatars, 140+ languages |
| Creator | $89/month | Unlimited videos, 230+ avatars, custom avatar, brand kit |
| Enterprise | Custom | Advanced collaboration, SCORM export, SSO, dedicated support |

HeyGen Pricing

| Plan | Price | What You Get |
| --- | --- | --- |
| Free | $0 | 1 video/month, watermarked, limited features |
| Creator | $29/month | 15 videos/month, all avatars, video translation |
| Business | $89/month | Unlimited videos, live avatar, personalized video, team features |
| Enterprise | Custom | Custom avatars, API access, SSO, dedicated support |

Synthesia vs. HeyGen — Direct Comparison

| Feature | Synthesia | HeyGen |
| --- | --- | --- |
| Avatar Quality | ⭐⭐⭐⭐⭐ Industry leading | ⭐⭐⭐⭐ Excellent |
| Avatar Library | 230+ avatars | 100+ avatars |
| Video Translation | Basic | ⭐⭐⭐⭐⭐ Industry leading |
| Live Interactive Avatar | No | Yes |
| Personalized Video at Scale | Limited | ⭐⭐⭐⭐⭐ Excellent |
| LMS/SCORM Integration | Yes | No |
| Custom Avatar Creation | Video-based | Photo or video-based |
| Free Plan | No | Yes (very limited) |
| Best For | Enterprise L&D and corporate comms | Sales, marketing, and global content |
| Starting Price | $29/month | $29/month |

Real-World Use Cases

Where Synthesia Excels

Corporate training and L&D teams use Synthesia to produce multilingual training content at a fraction of traditional video production costs — with SCORM export making LMS integration seamless.

HR departments use Synthesia for onboarding videos, policy updates, and internal communications — maintaining a consistent, professional presenter voice across all content.

Global enterprises use Synthesia’s 140+ language support to localize training and communications content for international teams without running separate production processes for each market.

Where HeyGen Excels

Sales teams use HeyGen’s personalized video feature to send individually tailored outreach videos, which can improve response rates compared with standard email outreach.

Global marketing teams use HeyGen’s video translation feature to localize existing video content for international markets — preserving the authenticity of the original delivery without re-recording.

Customer service teams use HeyGen’s Live Avatar for interactive customer support experiences — handling common queries dynamically without requiring human agents for every interaction.

Who Should Choose Synthesia?

Synthesia is the right choice if:

- You’re a large enterprise or organization with formal video production workflows

- Your primary use case is corporate training, L&D, or internal communications

- You need SCORM export for Learning Management System integration

- You need the highest quality avatar output available

- You’re producing content in many different languages for global teams

- Brand consistency across high volumes of content is a priority

Who Should Choose HeyGen?

HeyGen is the right choice if:

- You’re in sales or marketing and need personalized video at scale

- Your primary use case involves translating existing video content into other languages

- You want to experiment with real-time interactive AI avatars

- You need a free plan to evaluate before committing financially

- You’re a smaller business or individual creator who needs flexibility and accessibility

- You want to create custom avatars quickly without a formal video shoot

Best for Editing Video by Editing the Script — Descript

Platform: Web, Windows, Mac

Starting Price: $24/month

Free Plan: Yes

Best For: Podcasters, video creators, educators, and content teams who want to edit video as easily and intuitively as editing a text document

What Is Descript?

Most video editors work the same way. You scrub through a timeline. You find the section you want to cut. You trim it. You repeat this process hundreds of times until your video is finished.

It works — but it’s slow, technical, and exhausting.

Descript threw out that entire paradigm and replaced it with something radically simpler: edit your video by editing its transcript.

Here’s how it works. You upload your video or audio file. Descript automatically transcribes every word spoken. That transcription becomes your editing interface. Delete a sentence from the transcript — and the corresponding section disappears from your video automatically. Move a paragraph — and the video footage moves with it. Find a filler word like “um” or “you know” — and remove every instance across your entire video in a single click.

It sounds almost too simple. But in practice, it is genuinely transformative — particularly for anyone who works regularly with talking-head videos, interviews, podcasts, tutorials, or any content that is primarily driven by spoken dialogue.

In 2026, Descript has evolved well beyond its core transcript-based editing into a comprehensive AI-powered content production platform. But that central innovation — editing video by editing text — remains its most powerful and distinctive capability.

First Impressions

When you open Descript for the first time, the interface feels immediately familiar. It looks less like traditional video editing software and more like a document editor: Google Docs meets a video timeline.

There’s a transcript panel on one side. A video preview on the other. A simplified timeline at the bottom. Everything is clean, uncluttered, and logically organized.

We uploaded a 25-minute interview recording and had a complete, clean transcript ready within three minutes. Transcription accuracy was excellent — capturing technical terms, different speakers, and varied accents with impressive precision.

From that point, editing felt genuinely effortless. Selecting and deleting unwanted sections in the transcript, removing filler words, reordering content — all of it happened in a fraction of the time it would take using traditional timeline-based editing software.

For anyone who has spent hours scrubbing through video timelines looking for a specific moment — Descript’s approach feels like a revelation.

What We Tested

We designed our Descript tests around realistic content production scenarios:

- Editing a 30-minute podcast interview down to a tight 18-minute episode

- Removing all filler words and false starts from a 15-minute tutorial video

- Correcting a spoken mistake in a recorded presentation without re-recording

- Creating highlight clips from a long-form interview for social media

- Generating captions and subtitles for a YouTube video

- Using AI voice cloning to fix a recording error without re-recording the section

- Repurposing a long-form video into multiple shorter clips for different platforms

- Collaborating with a team member on the same project simultaneously

Descript performed exceptionally well across every one of these scenarios — particularly the filler word removal and AI voice correction tests, where it saved enormous amounts of time compared to traditional editing approaches.

Standout Features

Transcript-Based Editing

This is the core innovation, and still the most powerful thing Descript does. Your video transcript is your edit decision list. Everything you do to the text (deleting, moving, cutting) is instantly reflected in the video.

For dialogue-driven content, this completely eliminates the need to scrub through timelines looking for specific moments. Find the word in the transcript. Make your edit. Done.

The time savings are significant. Editors who switch to Descript consistently report cutting their editing time by 40 to 60 percent for interview and dialogue-based content.
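To see why text edits can drive video cuts, consider a toy model in which each transcribed word carries start and end timestamps. This Python sketch is our own illustration of the general idea, not Descript's implementation. The `Word` type, the sample timings, and the `keep_ranges` helper are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds into the recording
    end: float


def keep_ranges(words: list[Word], deleted: set[int]) -> list[tuple[float, float]]:
    """Merge the timestamps of surviving words into contiguous video ranges."""
    ranges: list[tuple[float, float]] = []
    prev_kept = None
    for i, word in enumerate(words):
        if i in deleted:
            continue
        if ranges and prev_kept == i - 1:
            # Adjacent to the previous kept word: extend the current range.
            ranges[-1] = (ranges[-1][0], word.end)
        else:
            # First kept word, or first word after a deletion: start a new range.
            ranges.append((word.start, word.end))
        prev_kept = i
    return ranges


transcript = [
    Word("Welcome", 0.0, 0.5),
    Word("um", 0.5, 0.9),
    Word("to", 0.9, 1.1),
    Word("the", 1.1, 1.3),
    Word("show", 1.3, 1.8),
]

# Deleting the word at index 1 ("um") drops 0.5s to 0.9s from the video.
print(keep_ranges(transcript, deleted={1}))  # [(0.0, 0.5), (0.9, 1.8)]
```

The editor only ever touches the word list. The list of time ranges to keep, which is what a traditional timeline editor makes you build by hand, falls out automatically.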

Overdub — AI Voice Cloning

This is one of Descript’s most remarkable features. Overdub allows you to create an AI clone of your own voice — trained on your actual recordings — and use it to fix mistakes, add new sentences, or correct mispronunciations without ever re-recording.

Made a factual error in your video? Mispronounced a word? Forgot to include an important point? Simply type the correction into your transcript and Descript’s AI generates the missing audio in your own voice — seamlessly inserted into your video.

The accuracy of Overdub’s voice cloning is genuinely impressive. In our tests, listeners could not reliably identify which sections had been AI-generated versus recorded naturally. For tutorial creators, educators, and course producers — this feature alone saves hours of re-recording time.

Filler Word Removal

One click. Every “um,” “uh,” “you know,” “like,” and “so” in your entire video — identified and removed automatically.

Descript scans your transcript, highlights every filler word, and lets you remove all instances simultaneously. You can review each one individually before removing — or trust the AI and clear them all at once.

For content creators who speak naturally and conversationally on camera, this single feature can save 30 minutes or more of tedious manual editing on a typical video.
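The review-then-remove flow described above can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not how Descript detects fillers: the filler lists, `find_fillers`, and `remove_fillers` are hypothetical names, and matching is plain string comparison on normalized tokens.

```python
import string

# Hypothetical filler lists for illustration only.
SINGLE_FILLERS = {"um", "uh"}
PHRASE_FILLERS = [("you", "know")]


def normalize(token):
    """Lowercase a token and strip surrounding punctuation."""
    return token.lower().strip(string.punctuation)


def find_fillers(tokens):
    """Return sorted indices of tokens flagged as fillers, ready for review."""
    norm = [normalize(t) for t in tokens]
    flagged = set()
    for i, tok in enumerate(norm):
        if tok in SINGLE_FILLERS:
            flagged.add(i)
        for phrase in PHRASE_FILLERS:
            if tuple(norm[i:i + len(phrase)]) == phrase:
                flagged.update(range(i, i + len(phrase)))
    return sorted(flagged)


def remove_fillers(tokens, approved=None):
    """Remove flagged fillers; if `approved` is given, remove only those indices."""
    flagged = set(find_fillers(tokens))
    to_remove = flagged if approved is None else flagged & set(approved)
    return [t for i, t in enumerate(tokens) if i not in to_remove]


tokens = "Um, so you know, this is, uh, the timeline".split()
print(find_fillers(tokens))                  # indices a reviewer would see highlighted
print(" ".join(remove_fillers(tokens)))      # remove everything flagged
print(" ".join(remove_fillers(tokens, approved=[0])))  # remove only the first one
```

Words such as "like" and "so" are deliberately left out of the sketch's filler lists, because naive matching would also delete legitimate uses. That is the practical reason a per-instance review step is worth having.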

Underlord — AI Editing Suite

Descript’s Underlord is an integrated suite of AI editing tools accessible directly within the platform. Key Underlord capabilities include:

- Remove Silence — automatically detects and removes awkward pauses and dead air

- Eye Contact Correction — subtly adjusts your gaze in recorded footage to maintain direct eye contact with the camera even when you were looking at notes or a script

- Studio Sound — removes background noise, echo, and room sound from your audio automatically

- Green Screen — automatically detects and removes backgrounds without physical green screen equipment

- Clip Creation — automatically identifies the most engaging moments from long-form content and suggests highlight clips for social media

Screen Recording with Automatic Transcription

Descript includes built-in screen recording — and everything you record is automatically transcribed and ready to edit immediately. For tutorial creators, product demo makers, and software trainers, this seamless record-then-edit workflow is enormously efficient.

Multitrack Timeline for Advanced Editing

While Descript’s transcript-based editing handles most tasks, the platform also includes a full multitrack timeline for users who need more granular control. Add B-roll footage, music tracks, sound effects, transitions, and graphics — all within the same interface.

This means Descript functions as a complete video editor for most dialogue-driven projects, not just a transcript tool. In many cases, you won’t need to export your project to another platform for finishing and polishing.

Automatic Captions and Subtitles

Descript generates accurate, styled captions automatically from your transcript. Captions can be customized — font, size, color, position, animation style — and exported as burned-in subtitles or as separate caption files compatible with YouTube, Vimeo, and other platforms.

For creators who publish regularly to social media — where a significant percentage of viewers watch without sound — automatic caption generation is an enormous time-saver.
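For readers curious what a "separate caption file" looks like, the sketch below writes timed caption cues in the widely used SubRip (SRT) layout: a cue number, a `HH:MM:SS,mmm --> HH:MM:SS,mmm` time line, the caption text, and a blank line. The cue data and the function names are made up for illustration, and this says nothing about how Descript generates its own exports.

```python
def srt_timestamp(seconds):
    """Format a time in seconds as an SRT timestamp: HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(cues):
    """Build SRT text from a list of (start_seconds, end_seconds, text) cues."""
    blocks = []
    for number, (start, end, text) in enumerate(cues, start=1):
        blocks.append(
            f"{number}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}\n"
        )
    # A blank line separates consecutive cues.
    return "\n".join(blocks)


# Hypothetical cues, as they might be derived from a timed transcript.
cues = [
    (0.0, 1.2, "Welcome back."),
    (1.2, 2.75, "Let's get started."),
]
print(to_srt(cues))
```

A file like this stays separate from the video, so viewers can toggle captions on or off. Burned-in subtitles, by contrast, are rendered into the picture itself.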

Remote Recording with SquadCast

Descript includes SquadCast, an integrated remote recording tool that captures separate, high-quality audio and video tracks from each participant in a remote interview or podcast conversation.

This means you can schedule, record, transcribe, and edit a remote interview — all within a single platform — without needing separate tools for recording and editing.

Publishing and Distribution

Once your video is edited, Descript lets you publish directly to YouTube, export for other platforms, or generate a shareable link for client review — all without leaving the platform.

Where It Falls Short

Descript is exceptional for its core use case — but it has real limitations worth understanding.

Not ideal for footage-heavy content. Descript’s transcript-based approach works brilliantly for dialogue-driven content. But for videos that rely heavily on B-roll, visual storytelling, or complex multi-camera footage — traditional editing software like Premiere Pro or Final Cut Pro still offers more power and flexibility.

Overdub voice quality has limits. While Overdub is impressive, it works best for short corrections and additions. Generating large amounts of AI voice content — entire new sections of narration — can sometimes sound slightly synthetic when listened to carefully.

Video effects and motion graphics are limited. Descript is not a full-featured motion graphics tool. If your videos require complex animations, lower thirds, dynamic title sequences, or advanced visual effects — you’ll need to supplement Descript with a dedicated motion graphics tool.

Transcription accuracy drops with accents. While transcription accuracy is excellent for standard American and British English accents, accuracy can drop noticeably for strong regional accents or non-native English speakers. This can mean additional time spent correcting transcription errors before editing.

Export options can be limiting. Some advanced export formats and settings available in professional editing software are not available in Descript. For broadcasters or filmmakers with specific technical delivery requirements, this may be a constraint.

Collaboration features need improvement. While team collaboration is supported, simultaneous real-time co-editing — similar to Google Docs — is still not fully implemented. Larger teams may encounter workflow friction when multiple editors need to work on the same project simultaneously.

Pricing jumps sharply between tiers. The step from the free plan to the $24/month Hobbyist plan is steep, and so is the step from the $40/month Creator plan to the $75/month Business plan. Users who outgrow the free plan quickly may find the increase in cost more than they anticipated.

Pricing Breakdown

| Plan | Price | What You Get |
| --- | --- | --- |
| Free | $0 | 1 hour transcription/month, watermarked exports, basic editing |
| Hobbyist | $24/month | 10 hours transcription/month, Overdub, no watermark, captions |
| Creator | $40/month | 30 hours transcription/month, full Underlord suite, screen recording |
| Business | $75/month | Unlimited transcription, team features, advanced collaboration, API |
| Enterprise | Custom | Custom transcription, SSO, dedicated support, advanced security |

Annual billing reduces these prices by approximately 25 percent across all plans — making the Hobbyist plan available at around $18/month on an annual subscription.
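The annual-billing arithmetic is easy to check for the other plans as well. The short sketch below applies a flat 25 percent discount to the monthly prices listed in the table. The flat rate is an assumption based on the "approximately 25 percent" figure above, so actual checkout prices may differ slightly.

```python
# Monthly prices taken from the pricing table above.
MONTHLY_PRICES = {"Hobbyist": 24, "Creator": 40, "Business": 75}

# Assumed flat discount; the article only says "approximately 25 percent".
ANNUAL_DISCOUNT = 0.25


def annual_billing_monthly(price, discount=ANNUAL_DISCOUNT):
    """Effective per-month price when billed annually at the given discount."""
    return round(price * (1 - discount), 2)


for plan, price in MONTHLY_PRICES.items():
    effective = annual_billing_monthly(price)
    yearly_saving = round((price - effective) * 12, 2)
    print(f"{plan}: ${effective:.2f}/month billed annually, saving ${yearly_saving:.2f} per year")
```

For the Hobbyist plan this works out to $18.00 per month, which matches the figure quoted above.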

Real-World Use Cases

Descript’s unique approach makes it particularly valuable in specific content production contexts:

Podcasters use Descript as their primary production tool: recording remotely with SquadCast, editing by transcript, removing filler words automatically, and publishing directly to podcast platforms, all within a single workflow.

YouTubers and video educators use Descript’s screen recording, transcript editing, and automatic caption generation to produce tutorial and educational content significantly faster than traditional editing workflows allow.

Corporate communications teams use Descript to produce internal video updates, town hall recordings, and training content — using Overdub to correct errors without scheduling re-recording sessions.

Journalists and documentary makers use Descript’s transcript-based approach to navigate and edit long interview recordings — finding specific quotes and moments by searching the transcript rather than scrubbing through timelines.

Course creators and online educators use Descript’s combination of screen recording, Overdub, and automatic captions to produce polished online course content efficiently — maintaining high production quality without expensive editing support.

Marketing and content agencies use Descript to rapidly turn long-form video content — webinars, interviews, presentations — into multiple shorter clips, highlight reels, and social media assets.

Descript vs. Traditional Video Editors — Is It Worth Switching?

This is the most common question from creators evaluating Descript. Here’s an honest answer:

Switch to Descript if:

- The majority of your video content is dialogue-driven — interviews, tutorials, presentations, podcasts

- You spend significant time removing filler words, silences, and unwanted sections

- You regularly need to fix spoken mistakes without re-recording

- You want to produce captions and social clips as part of your standard workflow

- You’re a solo creator or small team without dedicated technical video editors

Stick with traditional editors if:

- Your content relies heavily on complex visual storytelling, B-roll, or multi-camera editing

- You need advanced motion graphics, color grading, or visual effects

- You have specific technical export requirements for broadcast or professional delivery

- You’re working with footage types or formats that Descript’s import doesn’t fully support

Many professional creators use both — Descript for the rough cut and transcript-based editing, then a traditional editor for final polish and visual finishing. This hybrid workflow captures the speed benefits of Descript’s approach while retaining the full power of professional editing software for the final output.

Who Is Descript Best For?

Descript is the right choice if:

- You’re a podcaster who needs an end-to-end audio and video production workflow in a single platform

- You’re a YouTuber or educator producing regular tutorial, interview, or talking-head content

- You’re a content team or agency repurposing long-form video into multiple shorter assets

- You make frequent spoken errors in recordings and want to fix them without re-recording

- You want automatic captions generated accurately and quickly as part of your standard workflow

- You’re new to video editing and find traditional timeline-based editing software intimidating

It’s probably not the right choice if:

- Your content requires complex visual effects, motion graphics, or advanced color grading

- You work primarily with footage-heavy content rather than dialogue-driven video

- You need broadcast-grade technical export specifications

- You’re an experienced professional editor who is fully fluent in traditional editing software and workflows

Best for Extracting Viral Clips from Long-Form Content — OpusClip

Platform: Web, iOS

Starting Price: $19/month

Free Plan: Yes (limited)

Best For: Content creators, podcasters, marketers, and media teams who want to automatically extract the most engaging moments from long-form video and repurpose them into viral short-form clips

What Is OpusClip?

Every long-form video contains hidden gold.

That two-hour podcast episode has a three-minute moment that would stop someone mid-scroll on TikTok. That 45-minute webinar contains a 60-second insight that would perform brilliantly as a LinkedIn clip. That hour-long YouTube video has five or six moments powerful enough to stand alone as Reels or Shorts.

The problem has always been finding those moments — and then doing all the work required to turn them into polished, platform-ready clips. Trimming. Captioning. Reformatting. Adding graphics. It’s time-consuming, repetitive work that most creators simply don’t have the bandwidth to do consistently.

OpusClip solves this problem entirely.

It uses AI to watch your long-form video, identify the most engaging and shareable moments, extract them automatically, reformat them for short-form platforms, add animated captions, and deliver a batch of ready-to-publish clips — all without you manually reviewing a single second of footage.

In 2026, OpusClip has become a go-to tool for content repurposing among serious creators and media teams. Its AI has gotten significantly smarter: it no longer just finds moments that are visually engaging, but also weighs the narrative hooks, emotional peaks, and shareability signals that make content perform well on social media.

First Impressions

OpusClip’s interface is clean, modern, and immediately purposeful. There’s no complex dashboard to navigate. The workflow is deliberately simple:

Upload your video — or paste a YouTube, Zoom, or podcast URL. Choose your target platforms and clip length preferences. Hit process. Wait.

That’s genuinely it.

We tested with a 90-minute podcast episode. Within 12 minutes, OpusClip had processed the entire video and delivered 14 individual clips — each trimmed, captioned, formatted for vertical video, and scored by the AI for estimated virality potential.