Artificial intelligence is changing how we live, work, and make decisions. From smart assistants to automated systems, AI is everywhere. But with this rapid growth come serious questions.

Can AI be trusted? Is it fair to everyone? Who is responsible when it makes mistakes?

AI ethics focuses on answering these questions. It aims to ensure that technology is used in a way that is safe, fair, and beneficial for society. Without ethical guidelines, AI can reinforce bias, invade privacy, and even cause harm.

Understanding AI ethics is no longer optional—it’s essential. In this article, you’ll explore what AI ethics means, why it matters, and how we can build a more responsible AI-driven future.

Introduction to AI Ethics

- Brief overview of artificial intelligence

- Why ethics is a critical concern today

What Is AI Ethics?

- Definition and scope

- Key concepts in ethical AI

Importance of AI Ethics

- Impact on society, businesses, and individuals

- Trust, fairness, and accountability

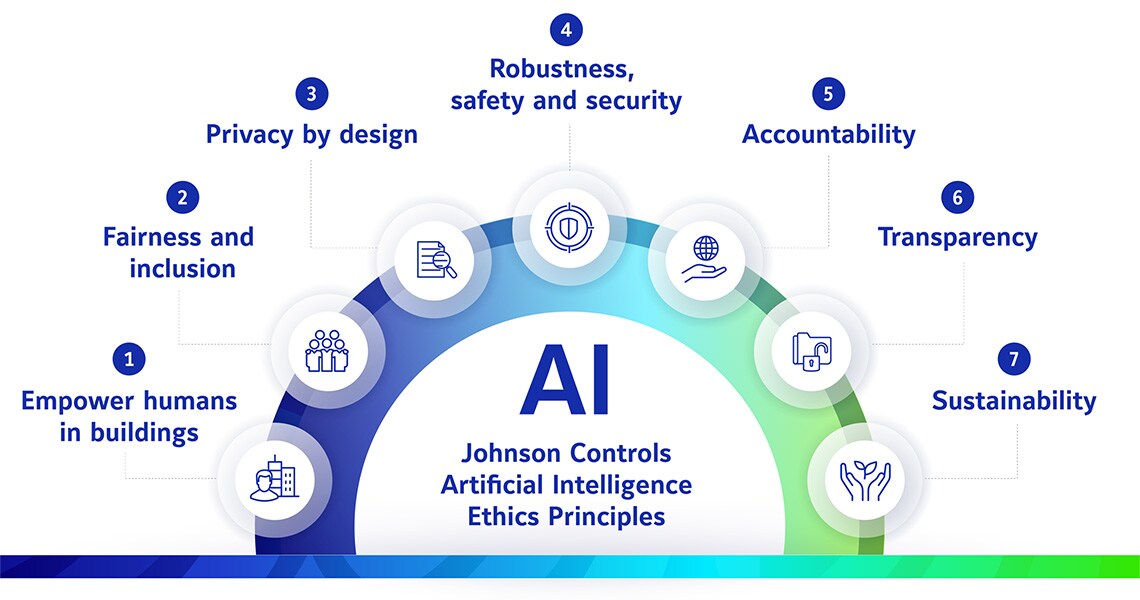

Core Principles of Ethical AI

- Fairness and non-discrimination

- Transparency and explainability

- Privacy and security

- Accountability

Major Ethical Challenges in AI

- Bias and discrimination

- Privacy concerns

- Job displacement

- Misinformation and misuse

Global Governance and Ethical Frameworks

- International efforts and policies

- Role of organizations and governments

Real-World Ethical Dilemmas in AI

- Practical examples across industries

- AI in media, healthcare, and business

Strategies for Building Ethical AI

- Responsible development practices

- Inclusive data and teams

- Monitoring and regulation

Future of AI Ethics

- Emerging trends

- Role of innovation and human rights

Introduction to AI Ethics

Artificial Intelligence (AI) refers to machines and systems designed to perform tasks that normally require human intelligence. These tasks include learning, problem-solving, decision-making, and even understanding language. Today, AI is used in everyday applications like search engines, recommendation systems, healthcare tools, and financial services.

As AI becomes more powerful and widespread, its influence on society continues to grow. It can improve efficiency, reduce human effort, and open new opportunities across industries. However, this rapid advancement also raises important concerns about how AI systems are designed and used.

For example, what happens if an AI system makes a biased decision? How is personal data being collected and protected? Can machines make decisions that impact human lives without proper oversight? These are not just technical questions—they are ethical ones.

This is where AI ethics comes into play. AI ethics is the study of moral principles and guidelines that help ensure AI technologies are developed and used responsibly. It focuses on making AI systems fair, transparent, secure, and aligned with human values.

Understanding the basics of AI ethics is the first step toward building trust in AI systems. As individuals, businesses, and governments rely more on AI, it becomes essential to ensure that these technologies benefit everyone without causing harm.

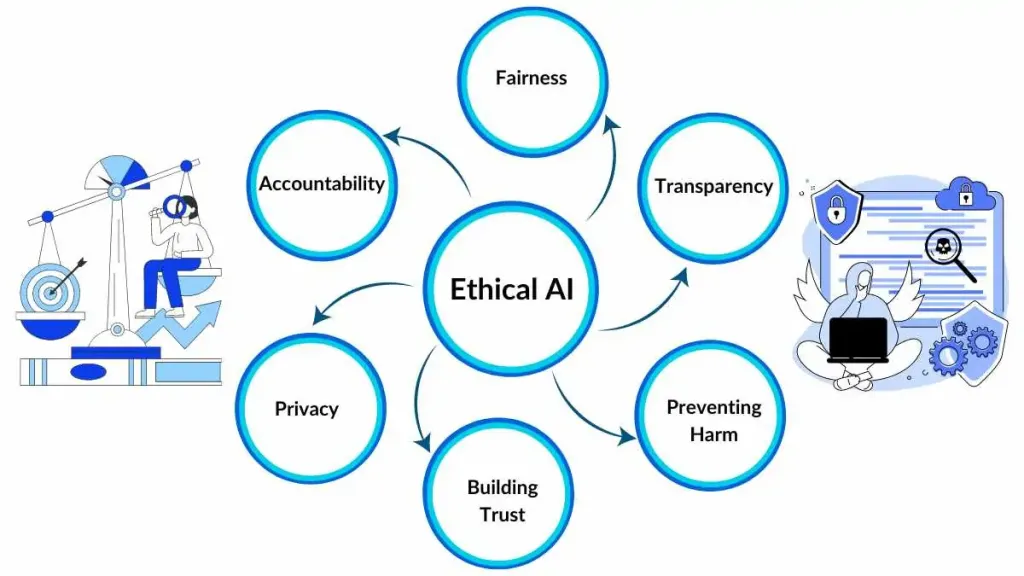

What Is AI Ethics?

AI ethics is a set of principles and guidelines that govern how artificial intelligence systems are designed, developed, and used. It focuses on ensuring that AI technologies operate in a way that is fair, transparent, and aligned with human values.

At its core, AI ethics is about making responsible choices. It asks whether AI systems treat people equally, respect privacy, and make decisions that can be trusted. Since AI can influence important areas like healthcare, finance, education, and hiring, ethical considerations are critical at every stage of development.

One key aspect of AI ethics is fairness. AI systems should not discriminate against individuals or groups based on race, gender, or other characteristics. However, since AI learns from data, it can sometimes inherit biases present in that data. Ethical AI aims to identify and reduce such biases.

Another important concept is transparency. Many AI systems operate like “black boxes,” meaning their decision-making process is not easily understood. AI ethics promotes explainability, so users can understand how and why decisions are made.

Privacy is also a major concern. AI often relies on large amounts of personal data. Ethical AI practice requires that this data be collected, stored, and used responsibly, with proper consent and protection.

Finally, accountability plays a crucial role. When an AI system makes a mistake or causes harm, it must be clear who is responsible. Developers, organizations, and stakeholders must take ownership of how AI systems behave.

In simple terms, AI ethics ensures that technology serves humanity in a safe and responsible way. It bridges the gap between innovation and responsibility, helping create AI systems that people can trust.

Importance of AI Ethics

AI ethics is important because artificial intelligence is no longer limited to research labs—it directly affects people’s daily lives. From loan approvals to medical diagnoses, AI systems are making decisions that can shape opportunities, outcomes, and even futures.

One major reason AI ethics matters is trust. People are more likely to use and accept AI technologies when they believe those systems are fair and reliable. If AI produces biased or harmful results, public trust quickly breaks down, which can slow innovation and adoption.

Another key factor is fairness. Without ethical guidelines, AI systems can unintentionally discriminate. For example, biased data can lead to unfair hiring decisions or unequal access to services. AI ethics pushes teams to design systems that treat people equitably and avoid reinforcing social inequalities.

Accountability is also essential. When AI systems make mistakes, there must be clear responsibility. Ethical frameworks help organizations define who is answerable for errors and ensure that proper actions are taken to prevent future issues.

AI ethics also protects privacy and security. Many AI applications rely on large amounts of personal data. Without proper safeguards, this data can be misused or exposed. Ethical practices ensure that user information is handled with care and respect.

For businesses, AI ethics is not just a moral responsibility—it’s a strategic advantage. Companies that prioritize ethical AI build stronger relationships with customers, avoid legal risks, and maintain a positive reputation in the market.

Finally, AI ethics helps ensure that technology benefits society as a whole. It encourages the development of AI systems that solve real problems while minimizing harm. As AI continues to evolve, ethical considerations will play a crucial role in shaping a future that is both innovative and responsible.

Core Principles of Ethical AI

To ensure that artificial intelligence is used responsibly, several core principles guide the development and deployment of AI systems. These principles help create technology that is not only powerful but also safe, fair, and trustworthy.

Fairness and Non-Discrimination

AI systems should treat all individuals equally. They must avoid bias based on factors like race, gender, age, or background. Since AI learns from data, biased datasets can lead to unfair outcomes. Ethical AI requires careful data selection and continuous testing to reduce discrimination.
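To make "continuous testing" more concrete, here is a minimal sketch of one common fairness check: comparing how often each group receives a positive decision. The group labels, the toy data, and the function names are assumptions made for illustration. This is only one of several fairness measures, and a small gap on this measure does not by itself show that a system is fair.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of positive decisions for each group.

    `decisions` is an iterable of (group, approved) pairs, where
    `approved` is True or False. Group labels are hypothetical.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Toy data: group "A" is approved 3 times out of 4, group "B" 1 time out of 4.
sample = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(sample)
gap = demographic_parity_gap(sample)
print(rates)
print(f"parity gap: {gap:.2f}")
```

A team might run a check like this on every model release and investigate whenever the gap exceeds a threshold it has agreed on in advance.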

Transparency and Explainability

AI should not operate as a mystery. Users and stakeholders should be able to understand how decisions are made. Transparency builds trust and allows people to question or challenge outcomes when necessary. Explainable AI models make it easier to identify errors and improve performance.

Privacy and Data Protection

AI relies heavily on data, often including personal and sensitive information. Ethical AI ensures that this data is collected with consent, stored securely, and used responsibly. Protecting user privacy is essential to prevent misuse and maintain confidence in AI systems.
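One practical way to limit exposure of personal data is to replace direct identifiers with keyed hashes before the data reaches an analytics or training pipeline. The sketch below uses Python's standard `hmac` and `hashlib` modules. The record fields, the helper names, and the key are hypothetical. Note that this is pseudonymization, not anonymization: the remaining fields can still allow re-identification, and it does not replace consent or access controls.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed SHA-256 digest (hex string)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_direct_identifiers(record: dict, id_fields: set, secret_key: bytes) -> dict:
    """Return a copy of `record` with the listed fields pseudonymized."""
    return {
        field: pseudonymize(str(value), secret_key) if field in id_fields else value
        for field, value in record.items()
    }


# Hypothetical record and key; a real key would be loaded from a secrets store.
key = b"example-key-do-not-use-in-production"
record = {"email": "pat@example.com", "age_band": "30-39", "outcome": "approved"}

safe = strip_direct_identifiers(record, {"email"}, key)
print(safe)
```

Because the hash is keyed, the same email always maps to the same token, so records can still be linked, but someone without the key cannot recompute the token from a guessed email.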

Accountability and Responsibility

There must always be human oversight in AI systems. If something goes wrong, it should be clear who is responsible. Developers, organizations, and stakeholders must take ownership of AI outcomes and ensure systems are regularly monitored and improved.

Safety and Reliability

AI systems should perform consistently and safely under different conditions. They must be tested thoroughly to prevent errors that could cause harm. Reliable AI systems reduce risks and ensure dependable results in critical areas like healthcare and transportation.

Human-Centered Design

AI should be designed to benefit people, not replace or harm them. Ethical AI focuses on enhancing human capabilities and supporting better decision-making. It ensures that technology aligns with human values, needs, and well-being.

By following these principles, organizations can develop AI systems that are not only innovative but also responsible. These guidelines act as a foundation for building trust and ensuring that AI serves society in a positive and meaningful way.

Major Ethical Challenges in AI

Despite its benefits, artificial intelligence brings several ethical challenges that cannot be ignored. These issues highlight the risks of using AI without proper guidelines and control. Understanding these challenges is essential for building more responsible systems.

Bias and Discrimination

One of the most common problems in AI is bias. Since AI systems learn from existing data, they can reflect and even amplify human prejudices. This can lead to unfair outcomes in areas like hiring, lending, and law enforcement. Addressing bias requires better data practices and continuous monitoring.

Privacy Concerns

AI systems often rely on large amounts of personal data. This raises concerns about how data is collected, stored, and used. Without proper safeguards, sensitive information can be exposed or misused. Protecting user privacy is a major ethical responsibility for organizations using AI.

Job Displacement

Automation powered by AI is changing the job market. While it creates new opportunities, it can also replace certain roles, especially repetitive or manual jobs. This shift can lead to unemployment and economic inequality if not managed properly. Ethical AI must consider the social impact on workers.

Misinformation and Manipulation

AI can be used to create and spread false information, such as deepfakes or automated fake news. This can influence public opinion, damage reputations, and even affect elections. Controlling the misuse of AI in media and communication is a growing challenge.

Lack of Transparency (“Black Box” Problem)

Many AI systems operate in ways that are difficult to understand. This lack of transparency makes it hard to explain decisions or identify errors. When people cannot see how outcomes are produced, it reduces trust and accountability.

Security and Misuse

AI technologies can be misused for harmful purposes, such as cyberattacks, surveillance, or autonomous weapons. Weak security systems can also be exploited by attackers. Ensuring that AI is used safely and ethically is critical to preventing damage.

Environmental Impact

Training large AI models requires significant computational power, which consumes energy and contributes to carbon emissions. As AI adoption grows, its environmental footprint becomes an important ethical concern.

Global Governance and Ethical Frameworks

As artificial intelligence continues to expand across borders, the need for global governance has become more important than ever. AI is not limited to one country or industry, so ethical standards must be shared and coordinated internationally.

Governments and global organizations are working to create frameworks and policies that guide the responsible use of AI. These frameworks set rules for how AI should be developed, tested, and deployed. They also help ensure that AI systems respect human rights, promote fairness, and avoid harm.

One key goal of global governance is standardization. Without common guidelines, different countries may follow different rules, leading to confusion and potential misuse. International standards help create consistency, making it easier for organizations to build ethical AI systems that can be trusted worldwide.

Another important aspect is regulation and compliance. Governments are introducing laws and policies to control how AI is used, especially in sensitive areas like healthcare, finance, and security. The European Union's AI Act, for example, applies stricter obligations to uses it classifies as high-risk. These regulations are meant to hold companies accountable and protect users.

Global frameworks also emphasize collaboration. Ethical AI is not just the responsibility of governments—it involves businesses, researchers, and civil society. By working together, these stakeholders can share knowledge, address risks, and develop better solutions.

In addition, many frameworks focus on a human rights approach. This means AI systems should respect fundamental rights such as privacy, equality, and freedom of expression. Protecting these rights ensures that technology benefits people without exploiting or harming them.

Overall, global governance and ethical frameworks provide a foundation for responsible AI development. They help balance innovation with accountability, ensuring that AI continues to grow in a way that is safe, fair, and beneficial for everyone.

Real-World Ethical Dilemmas in AI

AI ethics is not just a theoretical concept. It plays out in real-world situations where decisions can have serious consequences. These ethical dilemmas often arise when the people who build or deploy AI systems must trade off competing values, such as efficiency versus fairness or innovation versus privacy.

One common example is in healthcare. AI systems are used to assist in diagnosing diseases and recommending treatments. But what if an AI makes an incorrect diagnosis? Who is responsible—the developer, the hospital, or the doctor? There is also the risk of biased medical data, which can lead to unequal treatment for different groups of patients.

In the hiring process, many companies use AI tools to screen resumes and select candidates. While this can save time, it can also reinforce bias if the training data reflects past discrimination. This raises concerns about fairness and equal opportunity in employment.

Another major dilemma appears in autonomous vehicles. Self-driving cars must make split-second decisions in critical situations. For example, how should a car react in an unavoidable accident? Should it prioritize the safety of passengers or pedestrians? These decisions involve complex moral judgments that are difficult to program into machines.

AI also plays a big role in social media and content recommendation. Algorithms decide what content users see, which can influence opinions and behavior. This creates ethical concerns around misinformation, echo chambers, and manipulation of public opinion.

In the field of surveillance and security, AI-powered facial recognition systems are used by governments and organizations. While they can improve safety, they also raise serious privacy concerns and the risk of misuse, especially if used without consent or proper regulation.

These real-world dilemmas show that AI decisions are not always clear-cut. They often involve trade-offs that impact individuals and society. Addressing these challenges requires careful design, strong ethical guidelines, and human oversight to ensure that AI systems act in ways that align with societal values.

Strategies for Building Ethical AI

Creating ethical AI is not a one-time task—it requires continuous effort throughout the entire lifecycle of an AI system. Organizations must adopt clear strategies to ensure that their AI solutions are responsible, fair, and trustworthy.

Define Clear Ethical Standards

The first step is to establish strong ethical guidelines. Organizations should define what responsible AI means for them, based on values like fairness, transparency, and accountability. These standards act as a foundation for all AI-related decisions.

Use High-Quality and Diverse Data

Since AI systems learn from data, the quality of that data is critical. Using diverse and well-balanced datasets helps reduce bias and ensures more accurate outcomes. Regular data audits can identify and correct potential issues early.
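A data audit can start with something as simple as counting how well each group is represented. The following sketch flags any group whose share of the dataset falls below a chosen minimum. The group labels and the 10% threshold are assumptions for illustration. The right threshold depends on the population the system is meant to serve.

```python
from collections import Counter


def representation_report(groups, min_share=0.10):
    """Return {group: (share, flagged)}; flagged is True when share < min_share."""
    counts = Counter(groups)
    total = sum(counts.values())
    return {
        group: (count / total, count / total < min_share)
        for group, count in counts.items()
    }


# Toy dataset of 100 rows with a hypothetical "region" attribute.
labels = ["urban"] * 70 + ["suburban"] * 25 + ["rural"] * 5

report = representation_report(labels)
for group, (share, flagged) in report.items():
    note = "under-represented" if flagged else "ok"
    print(f"{group}: {share:.0%} ({note})")
```

In this toy data the "rural" group makes up 5% of the rows and is flagged, which would be a prompt to collect more examples or to evaluate the model separately on that group.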

Build Inclusive and Skilled Teams

Diverse teams bring different perspectives, which helps in identifying ethical risks that might otherwise be overlooked. Including experts from various fields—such as technology, ethics, and law—leads to more balanced and responsible AI development.

Ensure Transparency and Explainability

AI systems should be designed in a way that their decisions can be understood. Providing clear explanations builds trust and allows users to question or verify outcomes. This is especially important in high-stakes areas like healthcare and finance.
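For simple models, an explanation can be as direct as showing how much each input pushed the result up or down. The sketch below does this for a linear scoring model, where each feature's contribution is its weight multiplied by its value. The weights, feature names, and applicant are made up for illustration. More complex models need other explanation techniques, which this example does not cover.

```python
def explain_linear_score(weights, bias, features):
    """Break a linear score into per-feature contributions.

    score = bias + sum(weights[name] * features[name] for each feature)
    """
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    return score, contributions


# Hypothetical credit-style model; weights and feature names are invented.
weights = {"income_k": 0.5, "missed_payments": -2.0, "years_at_job": 1.0}
applicant = {"income_k": 40, "missed_payments": 3, "years_at_job": 2}

score, parts = explain_linear_score(weights, bias=-10.0, features=applicant)

# List the features by the size of their effect, largest first.
for name, value in sorted(parts.items(), key=lambda item: abs(item[1]), reverse=True):
    print(f"{name}: {value:+.1f}")
print(f"total score: {score:.1f}")
```

An output like this lets a user see, for instance, that missed payments lowered the score, which gives them something specific to question or correct.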

Implement Regular Monitoring and Audits

AI systems should not be left unchecked after deployment. Continuous monitoring helps detect errors, bias, or unexpected behavior. Regular audits ensure that systems remain aligned with ethical standards over time.
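As a rough illustration of post-deployment monitoring, the sketch below compares the share of positive decisions in a recent window against a baseline and raises a flag when the rate moves by more than a set tolerance. The data, the function names, and the 0.10 tolerance are assumptions. A real monitoring setup would usually track several signals, including per-group rates and input data drift.

```python
def positive_rate(outcomes):
    """Share of True values in a non-empty list of boolean outcomes."""
    return sum(outcomes) / len(outcomes)


def drift_alert(baseline, recent, tolerance=0.10):
    """Return (shift, alert); alert is True if the rate moved more than tolerance."""
    shift = positive_rate(recent) - positive_rate(baseline)
    return shift, abs(shift) > tolerance


# Toy data: 60% positive decisions at validation time, 42% in the latest window.
baseline = [True] * 60 + [False] * 40
recent = [True] * 42 + [False] * 58

shift, alert = drift_alert(baseline, recent)
print(f"shift: {shift:+.2f}, alert: {alert}")
```

An alert here does not prove that something is wrong. It is a signal that a person should look at what changed, which is the point of keeping humans in the loop.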

Establish Accountability Mechanisms

Organizations must clearly define who is responsible for AI decisions. Having accountability structures ensures that issues are addressed quickly and responsibly, reducing risks and improving system reliability.

Engage Stakeholders and the Public

Ethical AI development should involve input from different stakeholders, including users, policymakers, and communities. This helps ensure that AI systems reflect real-world needs and values.

Stay Updated and Adapt

AI is a rapidly evolving field. Organizations must stay informed about new risks, technologies, and regulations. Adapting to changes ensures that ethical practices remain effective in the long term.

Future of AI Ethics

The future of AI ethics is closely tied to the rapid growth of artificial intelligence technologies. As AI continues to evolve, ethical considerations will become even more critical to ensure that these systems benefit society while minimizing risks.

Emerging Ethical Challenges

New AI technologies, such as generative AI and advanced autonomous systems, are creating novel ethical questions. For example, deepfake content, AI-driven surveillance, and autonomous decision-making raise concerns about privacy, consent, and accountability. Staying ahead of these challenges requires proactive ethical planning and regulation.

Integration with Policy and Regulation

Governments and international organizations are increasingly developing laws and frameworks for ethical AI. In the future, compliance with ethical standards will likely be mandatory for AI deployment. This will help ensure fairness, transparency, and safety across industries and borders.

Focus on Human-Centered AI

The future of AI ethics emphasizes designing systems that enhance human capabilities rather than replace them. Human-centered AI prioritizes user well-being, inclusivity, and respect for fundamental rights, ensuring that technology supports society positively.

Collaboration Across Stakeholders

Ethical AI will require close cooperation between developers, policymakers, researchers, and civil society. Collaboration helps identify risks, share best practices, and create global standards. This collective effort ensures AI serves humanity responsibly.

Sustainability and Social Responsibility

Future AI systems will also need to address environmental and social impacts. Ethical considerations will include energy consumption, equitable access to AI benefits, and minimizing harm to vulnerable populations.

Continuous Adaptation and Learning

AI is a rapidly changing field. Ethical frameworks will need to evolve alongside technological advancements. Organizations must remain vigilant, continuously updating practices to address emerging risks and ensure responsible innovation.

Frequently Asked Questions (FAQs) on AI Ethics

AI ethics can seem complex, but a handful of common questions cover most of its purpose and application. Here are answers to the most frequently asked ones.

1. How can I use AI ethically?

Using AI ethically means designing and deploying AI systems in ways that prioritize fairness, transparency, accountability, and privacy. It involves monitoring outputs, minimizing bias, and ensuring AI decisions do not harm individuals or communities.

2. Why is studying AI important for the future?

AI is increasingly shaping industries, jobs, and daily life. Studying AI helps individuals and organizations understand its potential benefits and risks, equipping them to make informed decisions and create systems that are responsible and sustainable.

3. What is the role of transparency in AI ethics?

Transparency ensures that AI systems are understandable and explainable. When people can see how decisions are made, it builds trust and allows for accountability, especially in critical areas like healthcare, finance, and law enforcement.

4. How can AI bias be addressed?

AI bias can be reduced through diverse and representative datasets, regular testing, and auditing of AI systems. Including diverse perspectives in AI development teams also helps identify and correct potential biases before deployment.

5. What is the future of AI ethics?

The future of AI ethics involves proactive regulation, global collaboration, and human-centered design. Ethical practices will continue to evolve alongside technology, focusing on safety, fairness, and societal well-being.

6. Are there any international standards for AI ethics?

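Yes. Several international organizations have published ethical guidelines and frameworks for AI. The OECD adopted its AI Principles in 2019, and UNESCO adopted its Recommendation on the Ethics of Artificial Intelligence in 2021. These frameworks promote fairness, accountability, privacy, and human rights globally, although they are recommendations rather than binding law.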

7. How can individuals learn more about AI ethics?

Individuals can explore AI ethics through online courses, research papers, workshops, and webinars. Engaging with professional organizations and communities focused on responsible AI is also valuable.

8. What are the consequences of ignoring AI ethics?

Ignoring AI ethics can lead to biased decisions, privacy violations, legal penalties, loss of trust, and negative social impact. Organizations may face reputational damage, financial loss, and regulatory action if AI systems are misused or harmful.

Take Action: Implementing Ethical AI

Understanding AI ethics is crucial, but real impact comes from action. Organizations and individuals must actively implement ethical principles to ensure AI serves people responsibly and safely.

Start with Governance and Policies

Create clear internal guidelines for AI development and deployment. Define responsibilities, ethical standards, and decision-making processes. Governance ensures that ethical considerations are part of every stage, from design to deployment.

Integrate Ethics into the Development Process

Ethical principles should be embedded directly into how AI systems are built. This includes selecting representative datasets and checking them for bias, designing explainable algorithms, and testing models for fairness and safety. Making ethics part of the workflow catches many problems before they reach users.

Conduct Regular Audits

AI systems must be monitored continuously. Regular audits help detect bias, errors, or unintended consequences. Monitoring also ensures compliance with internal standards and external regulations, keeping AI accountable.

Engage Stakeholders

Include input from users, employees, regulators, and communities. Stakeholder engagement ensures AI systems meet real-world needs and reflect societal values. Feedback loops help improve AI continuously and maintain public trust.

Educate and Train Teams

Employees working with AI should understand ethical considerations. Training programs and workshops can build awareness of bias, privacy concerns, and responsible AI practices. A knowledgeable team is essential for ethical decision-making.

Adopt Human-Centered AI Practices

Prioritize systems that enhance human well-being. Ethical AI should augment human decision-making, respect rights, and minimize harm. Technology should empower people, not replace or disadvantage them.

Stay Informed and Adaptable

AI technology and ethical standards evolve quickly. Organizations must stay up-to-date with regulations, best practices, and emerging risks. Flexibility and adaptation are critical to maintaining responsible AI over time.

By taking these steps, AI ethics moves from theory to practice. Implementing ethical AI ensures technology is trustworthy, fair, and beneficial—helping organizations innovate responsibly while protecting people and society.

Conclusion

Artificial intelligence is transforming the world at an unprecedented pace, offering opportunities to improve healthcare, education, business, and everyday life. However, with these opportunities come significant ethical responsibilities. Without careful oversight, AI can entrench bias, invade privacy, displace workers, and spread misinformation.

AI ethics provides the framework to address these challenges. By focusing on fairness, transparency, accountability, and human-centered design, we can build systems that are not only effective but also trustworthy and equitable. Ethical principles guide developers, organizations, and policymakers to make decisions that prioritize society’s well-being alongside innovation.

Global governance and collaboration are essential for ensuring that AI serves everyone fairly, while strategies such as monitoring, diverse data, and stakeholder engagement help create responsible AI in practice. As technology evolves, ethical considerations must evolve with it, ensuring AI remains a tool for good.

In short, AI ethics is not just a set of guidelines—it is the foundation for a future where artificial intelligence enhances human life responsibly, safely, and inclusively. The choices we make today will shape the impact of AI for generations to come.